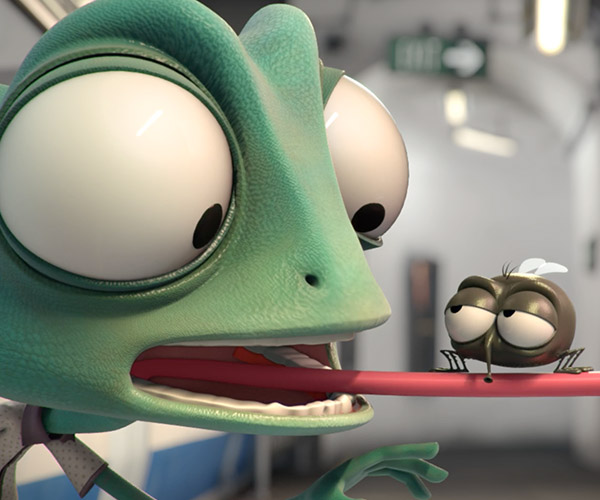

A chameleon spies his crush while waiting for the subway, and aims to impress her with a gift. But Darrel doesn’t exactly have the best of luck in this amusing and award-winning animated short film out of Spain, directed by Marc Briones and Alan Carabantes.

Highlights From Former Rep. Chris Cox’s Amicus Brief Explaining The History And Policy Behind Section 230

The Copia Institute was not the only party to file an amicus brief in support of Airbnb and Homeaway’s Ninth Circuit appeal of a district court decision denying them Section 230 protection. For instance, a number of Internet platforms, including those like Glassdoor, which hosts specialized user expression, and those like eBay, which hosts transactional user expression, filed one pointing out how a ruling denying Airbnb and Homeaway would effectively deny it to far more platforms hosting far more kinds of user speech than just those platforms behind the instant appeal.

And then there was this brief, submitted on behalf of former Congressman Chris Cox, who, with then-Representative Ron Wyden, had been instrumental in getting Section 230 on the books in the first place. With this brief the Court does not need to guess whether Congress intended for Section 230 to apply to platforms like Airbnb and Homeaway; the statute’s author confirms that it did, and why.

In giving insight into the statutory history of Section 230 the brief addresses the two main issues raised by the Airbnb appeal – issues that are continuing to come up over and over again in Section 230-related litigation in state and federal courts all over the country: does Section 230 apply to platforms intermediating transactional user expression, and does Section 230’s pre-emption language preclude efforts by state and local authorities to hold these platforms liable for intermediating the consummation of the transactional speech. Cox’s brief describes how Congress intended both these questions to be answered in the affirmative and thus may be relevant to these other cases. With that in mind, we are archiving – and summarizing – the brief here.

To illustrate why Section 230 should apply in these situations, first the brief explains the historical context that prompted the statute in the first place:

In 1995, on a flight from California to Washington, DC during a regular session of Congress, Representative Cox read a Wall Street Journal article about a New York Superior Court case that troubled him deeply. The case involved a bulletin board post on the Prodigy web service by an unknown user. The post said disparaging things about an investment bank. The bank filed suit for libel but couldn’t locate the individual who wrote the post. So instead, the bank sought damages from Prodigy, the site that hosted the bulletin board.

[page 3]

The Stratton Oakmont v. Prodigy decision alarmed Cox for several reasons. One, it represented a worrying change in judicial attitudes towards third party liability:

Up until then, the courts had not permitted such claims for third party liability. In 1991, a federal district court in New York held that CompuServe was not liable in circumstances like the Prodigy case. The court reasoned that CompuServe “ha[d] no opportunity to review [the] contents” of the publication at issue before it was uploaded “into CompuServe’s computer banks,” and therefore was not subject to publisher liability for the third party content.

[page 3-4]

It had also resulted in a damage award of $200 million against Prodigy. [page 4]. Damage awards like these can wipe technologies off the map. If platforms had to fear the crippling effect that even one such award, arising from just one user, could have on their developing online services, it would dissuade them from being platforms at all. As the brief observes:

The accretion of burdens would be especially harmful to smaller websites. Future startups, facing massive exposure to potential liability if they do not monitor user content and take responsibility for third parties’ legal compliance, would encounter significant obstacles to capital formation. Not unreasonably, some might abjure any business model reliant on third-party content. [page 26]

Then there was also a third, related concern: according to the logic of Stratton Oakmont, which had distinguished itself from the earlier Cubby v. Compuserve case, unlike Compuserve, Prodigy had "sought to impose general rules of civility on its message boards and in its forums." [page 4].

The perverse incentive this case established was clear: Internet platforms should avoid even modest efforts to police their sites. [page 4]

The essential math was stark: Congress was worried about what was going on on the Internet. It wanted platforms to be an ally in policing it. But without protection for platforms, they wouldn’t be. They couldn’t be. So Cox joined with then-Representative Wyden to craft a bill that would trump the Stratton Oakmont holding. The result was the Internet Freedom and Family Empowerment Act, H.R. 1978, 104th Cong. (1995), which, by a 420-4 vote reflecting significant bipartisan support, became an amendment to the Communications Decency Act – Congress’s attempt to address the less desirable material on the Internet – which then came into force as part of the Telecommunications Act of 1996. [page 5-6]. The Supreme Court later gutted the indecency provisions of the CDA in Reno v. ACLU, but the parts of the CDA at Section 230 have stood the test of time. [page 6 note 2].

The statutory language provided necessary relief to platforms in two important ways. First, it included a "Good Samaritan" provision, meaning that "[i]f an Internet platform does review some of the content and restricts it because it is obscene or otherwise objectionable, then the platform does not thereby assume a duty to monitor all content." [page 6]. Because keeping platforms from having to monitor was the critical purpose of the statute:

All of the unique benefits the Internet provides are dependent upon platforms being able to facilitate communication among vast numbers of people without being required to review those communications individually. [page 12]

The concerns were practical. As other members of Congress noted at the time, “There is no way that any of those entities, like Prodigy, can take the responsibility [for all of the] information that is going to be coming in to them from all manner of sources.” [page 14]

While the volume of users [back when Section 230 was passed] was only in the millions, not the billions as today, it was evident to almost every user of the Web even then that no group of human beings would ever be able to keep pace with the growth of user-generated content on the Web. For the Internet to function to its potential, Internet platforms could not be expected to monitor content created by website users. [page 2]

Thus Section 230 established a new rule expressly designed to spare platforms from having to attempt this impossible task in order to survive:

The rule established in the bill […] was crystal clear: the law will recognize that it would be unreasonable to require Internet platforms to monitor content created by website users. Correlatively, the law will impose full responsibility on the website users to comply with all laws, both civil and criminal, in connection with their user-generated content. [But i]t will not shift that responsibility to Internet platforms, because doing so would directly interfere with the essential functioning of the Internet. [page 5]

That concern for the essential functioning of the Internet also explains why Section 230 was not drawn narrowly. If Congress had only been interested in protecting platforms from liability for potentially defamatory speech (as was at issue in the Stratton Oakmont case) it could have written a law that only accomplished that end. But Section 230’s language was purposefully more expansive. If it were not more expansive, while platforms would not have to monitor all the content they intermediated for defamation, they would still have to monitor it for everything else, and thus nothing would have been accomplished with this law:

The inevitable consequence of attaching platform liability to user-generated content is to force intermediaries to monitor everything posted on their sites. Congress understood that liability-driven monitoring would slow traffic on the Internet, discourage the development of Internet platforms based on third party content, and chill third-party speech as intermediaries attempt to avoid liability. Congress enacted Section 230 because the requirement to monitor and review user-generated content would degrade the vibrant online forum for speech and for e-commerce that Congress wished to embrace. [page 15]

Which returns to why Section 230 was intended to apply to transactional platforms. Congress didn’t want to be selective about which types of platforms could benefit from liability protection. It wanted them all to:

[T]he very purpose of Section 230 was to obliterate any legal distinction between the CompuServe model (which lacked the e-commerce features of Prodigy and the then-emergent AOL) and more dynamically interactive platforms. … Congress intended to “promote the continued development of the Internet and other interactive computer services” and “preserve the vibrant and competitive free market” that the Internet had unleashed. Forcing web sites to a Compuserve or Craigslist model would be the antithesis of the congressional purpose to “encourage open, robust, and creative use of the internet” and the continued “development of e-commerce.” Instead, it will slow commerce on the Internet, increase costs for websites and consumers, and restrict the development of platform marketplaces. This is just what Congress hoped to avoid through Section 230. [page 23-24]

And it wanted them all to be protected everywhere because Congress also recognized that they needed to be protected everywhere in order to be protected at all:

A website […] is immediately and uninterruptedly exposed to billions of Internet users in every U.S. jurisdiction and around the planet. This makes Internet commerce uniquely vulnerable to regulatory burdens in thousands of jurisdictions. So too does the fact that the Internet is utterly indifferent to state borders. These characteristics of the Internet, Congress recognized, would subject this quintessentially interstate commerce to a confusing and burdensome patchwork of regulations by thousands of state, county, and municipal jurisdictions, unless federal policy remedied the situation. [page 27]

Congress anticipated that states and local authorities would be tempted to impose liability on platforms, and in doing so interfere with the operation of the Internet by forcing platforms to monitor after all and thus cripple their operation:

Other state, county, and local governments would no doubt find that fining websites for their users’ infractions is more convenient than fining each individual who violates local laws. Given the unlimited geographic range of the Internet, unbounded by state or local jurisdiction, the aggregate burden on an individual web platform would be multiplied exponentially. While one monitoring requirement in one city may seem a tractable compliance burden, myriad similar-but-not-identical regulations could easily damage or shut down Internet platforms. [page 25]

So, "[t]o ensure the quintessentially interstate commerce of the Internet would be governed by a uniform national policy" of sparing platforms the need to monitor, Congress deliberately foreclosed the ability of state and local authorities to interfere with that policy with Section 230’s pre-emption provision. [page 10]. Without this provision, the statute would be useless:

Were every state and municipality free to adopt its own policy concerning when an Internet platform must assume duties in connection with content created by third party users, not only would compliance become oppressive, but the federal policy itself could quickly be undone. [page 13]

This pre-emption did not make the Internet a lawless place, however. Laws governing offline analogs to the services starting to flourish on the web would continue to apply; Section 230 simply prevented platforms from being held derivatively liable for user generated content that violated them. [page 9-10].

Notably, none of what Section 230 proposed was a controversial proposition:

When the bill was debated, no member from either the Republican or Democratic side could be found to speak against it. The debate time was therefore shared between Democratic and Republican supporters of the bill, a highly unusual procedure for significant legislation. [page 11]

It was popular because it advanced Congress’s overall policy to foster the most beneficial content online, and the least detrimental.

Section 230 by its terms applies to legal responsibility of any type, whether under civil or criminal state statutes and municipal ordinances. But the fact that the legislation was included in the CDA, concerned with offenses including criminal pornography, is a measure of how serious Congress was about immunizing Internet platforms from state and local laws. Internet platforms were to be spared responsibility for monitoring third-party content even in these egregious cases.

A bipartisan supermajority of Congress did not support this policy because they wished to give online commerce an advantage over offline businesses. Rather, it is the inherent nature of Internet commerce that caused Congress to choose purposefully to make third parties and not Internet platforms responsible for compliance with laws generally applicable to those third parties. Platform liability for user-generated content would rob the technology of its vast interstate and indeed global capability, which Congress decided to “embrace” and “welcome” not only because of its commercial potential but also “the opportunity for education and political discourse that it offers for all of us.” [page 11-12]

As the brief explains elsewhere, Congress’s legislative instincts appear to have been borne out, and the Internet today is replete with valuable services and expression. [page 7-8]. Obviously not everything the Internet offers is necessarily beneficial, but the challenges the Internet’s success poses don’t negate the policy balance Congress struck. Section 230 has enabled those successes, and if we want its commercial and educational benefit to continue to accrue, we need to make sure that the statute’s critical protection remains available to all who depend on it to realize that potential.


via Techdirt


Gauge Instruments Classic Watches

Gauge Instruments makes minimal analog watches inspired by precision instruments and vehicles. Their Classic Black and Classic White are prime examples of their approach. The watches have 42mm metal cases, a butterfly buckle and an anti-reflective sapphire crystal.

Amazon Web Services’ newest database, Amazon Neptune, is now generally available

After showing it off last year at AWS re:Invent 2017, Amazon Web Services announced Wednesday that its new cloud graph database, Amazon Neptune, is ready for the general public to take it for a spin.

Amazon Neptune fills a gap across the array of databases AWS customers can choose to run on its cloud, giving the company its own graph database product to offer next to open-source graph databases like JanusGraph and commercial products like Neo4j. AWS now offers six managed and generally available databases on its cloud service as well as a database migration service for moving hosted databases into the cloud.

Graph databases are designed for applications that need to quickly make sense of the associations between different groups of data. They allow users to store related bits of data as a graph that can be accessed in a single operation, rather than a bunch of individual queries for all that data.
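To make the "single operation" idea concrete, here is a minimal sketch in plain Python. It is not Neptune's query interface: the toy graph, the names in it and the `within_hops` helper are all assumptions made up for illustration. It shows one traversal answering a "who is within two hops of this user" question that would otherwise take a separate lookup per hop.

```python
from collections import deque

# Hypothetical toy social graph: each key is a user, each value the users they follow.
GRAPH = {
    "alice": ["bob", "carol"],
    "bob": ["dave"],
    "carol": ["dave", "erin"],
    "dave": [],
    "erin": ["alice"],
}


def within_hops(graph, start, max_hops):
    """Return {node: distance} for every node reachable from start in at most max_hops edges."""
    distances = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if distances[node] == max_hops:
            continue  # do not expand past the hop limit
        for neighbor in graph.get(node, []):
            if neighbor not in distances:  # first visit is the shortest path in a BFS
                distances[neighbor] = distances[node] + 1
                queue.append(neighbor)
    del distances[start]  # the starting node is not part of the answer
    return distances


print(within_hops(GRAPH, "alice", 2))
```

A graph database keeps the edges as first-class data, so a traversal like this runs inside the database rather than as a loop of individual queries issued by the application.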

Companies building social networks, fraud-detection apps or personalization features for existing apps, for example, can take advantage of graph databases to deliver more flexibility and speed. AWS rival Microsoft’s Azure Cosmos DB also offers graph database capabilities.

Amazon Neptune will replicate six copies of your data across three availability zones within AWS computing regions, and AWS said it was designed for 99.99 percent uptime. It is available in the US East (Northern Virginia), US East (Ohio), US West (Oregon), and EU (Ireland) regions, with more expected to arrive later this year.
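For a sense of scale, the 99.99 percent figure can be turned into a downtime budget with simple arithmetic. The sketch below assumes a 365-day year and treats the figure as a design target, as stated above, not as a contractual guarantee; the function name is made up for illustration.

```python
def downtime_minutes_per_year(availability_percent, days_per_year=365):
    """Minutes per year a service may be unavailable at the given availability percentage."""
    minutes_per_year = days_per_year * 24 * 60
    return minutes_per_year * (100 - availability_percent) / 100


# 99.99 percent availability leaves a little under an hour of downtime per year.
print(round(downtime_minutes_per_year(99.99), 2))
```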

via GeekWire

Amazon Web Services’ newest database, Amazon Neptune, is now generally available

Deploy MySQL on Oracle’s High-Performance Cloud (Step-by-step Guide)

Oracle Cloud Infrastructure (OCI) is Oracle’s second-generation cloud infrastructure. These new datacenters were built with the latest high-performance servers (Oracle’s X7 Servers) and were designed to eliminate network and CPU oversubscription. Due to high-performance systems and the multiple availability domains (ADs) in each region, these are the preferred environments for deploying MySQL. Since MySQL deploys on Compute services (IaaS), look for “Oracle Cloud Infrastructure Compute” (not Classic) on this region map.

Beyond the benefits of the second-generation datacenters, why deploy MySQL on Oracle’s cloud? Here are a few of the reasons people are choosing MySQL on OCI:

- No vendor lock-in: pay minimal or no egress charges and directly access your binary data files. Getting your data out of other clouds can be tedious and expensive.

- Consistency: use the same database on-premise as on-cloud. It’s the MySQL Enterprise Edition, the same version available from the website. Use the same monitor for both on-premise and cloud.

- Support: rely on database support from the team that develops MySQL.

As a new platform, extra steps are required to install the MySQL Cloud Service. Otherwise, the install will default to OCI-Classic and you’ll miss the benefits of the second-generation datacenters.

If you don’t have an Oracle Cloud account, get started for free: https://docs.oracle.com/en/cloud/get-started/subscriptions-cloud/csgsg/sign-free-oracle-cloud-promotion.html

LOGGING IN AND SETTING UP YOUR ENVIRONMENT

Sign in to your Cloud Account (cloud.oracle.com) and go to My Services Dashboard. Click the navigation menu icon in the top left corner of the My Services Dashboard and then click Compute.

This will bring you to the OCI, Oracle Cloud Infrastructure, console.

1. CREATE A COMPARTMENT

Create a compartment called “demo”.

- Click Identity. From left menu, choose Compartments and Create Compartment

- Name your compartment “Demo”.

2. CREATE A NETWORK

Then, create a virtual cloud network with three public subnets, which will span 3 Availability Domains (AD). Although it includes a built-in firewall to prevent intrusion, a more secure network should be used for production systems (see VCNs and Subnets).

- Click Networking, Virtual Cloud Networks

- Click Create Virtual Cloud Network

Complete the following fields:

- Create In Compartment: Demo

- IMPORTANT: Select option: Create Virtual Cloud Network plus related resources

- Click Create Virtual Cloud Network

3. ENABLE PLATFORM AS A SERVICE (PaaS) ACCESS

This next step enables the MySQL Cloud Service to access the underlying compute and storage resources.

- From left menu, select the dropdown for compartment list and select your root compartment.

- From top menu, click Identity, Policies and Create Policy.

- Name: I chose “MySQLPaaS access”

- Add four Policy Statements exactly like the following:

[ note: if you chose a different compartment name than demo, substitute accordingly in the following statements]

Allow service PSM to inspect vcns in compartment demo

Allow service PSM to use subnets in compartment demo

Allow service PSM to use vnics in compartment demo

Allow service PSM to manage security-lists in compartment demo

- Select Create
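Because the four statements differ only in the compartment name, they can be generated and then pasted into the policy form. This is a small convenience sketch, not an OCI API call; the helper name `psm_policy_statements` is an assumption, and the verb and resource pairs are copied from the statements above.

```python
# Verb/resource pairs copied from the four policy statements in this step.
PSM_GRANTS = [
    ("inspect", "vcns"),
    ("use", "subnets"),
    ("use", "vnics"),
    ("manage", "security-lists"),
]


def psm_policy_statements(compartment):
    """Build the four PSM policy statements for the given compartment name."""
    return [
        f"Allow service PSM to {verb} {resource} in compartment {compartment}"
        for verb, resource in PSM_GRANTS
    ]


# Print the statements for the compartment used in this guide.
for statement in psm_policy_statements("demo"):
    print(statement)
```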

4. CREATE A BUCKET FOR OBJECT STORAGE

- Click Storage, Object Storage

- Switch compartment (bottom left menu) to “Demo”

- Choose Create Bucket

- BucketName: MySQLBackups

- Create Bucket

Next, create a Swift password (an authentication token) to authenticate to the object storage. This will be required when setting up MySQL.

- Click Identity, Users

- For your user, choose the ellipsis on the right and select View User Details

- On left menu, choose “Auth Tokens”

- Generate Token. Important: write down this token. It’s unavailable after it’s created.

- While you’re here, write down the user name for which you’ve created this token. It should be something like: myadminaccount@email.com. You’ll need this later.

If you have issues, the above steps are well-documented here.

Before continuing to the next step, please write down the following from the current OCI console. You’ll need this info for the next steps.

- User name: Choose Identity, Users. This should be the user for whom you generated the Authentication Token in the previous step.

- Tenancy and region: Noted at top of screen

- Bucketname. Choose Storage, Object Storage. Note the name of the bucket that you created

5. DEPLOY THE MYSQL CLOUD SERVICE

First, navigate from OCI (Oracle Cloud Infrastructure) to the MyServices console. On the very top menu, click My Services. This should bring you back to the MyServices Dashboard.

To view the MySQL Cloud Service, either click “Customer Dashboard” or click the navigation menu (three parallel lines) in the upper left corner.

Click MySQL and choose Open Service Console and then Create Instance.

The following screenshots show appropriate field entries. Once you select “Region”, additional fields will display:

Availability Domain: Select AD1

Subnet: This was set up in step #2.

Click Next and complete Service Details.

A full explanation of the fields is available in the online documentation. However, Backup and Recovery Configuration requires further explanation.

Cloud Storage Container: The OCI format is https://swiftobjectstorage.<region>.oraclecloud.com/v1/<namespace>/<container>

For me, the region is us-ashburn-1, but this may vary depending on your location and your account. The namespace is the tenancy, which is the name of your Oracle account (hint: it’s in your current URL: https://psm-<tenancy>.console.oraclecloud.com). We named our container MySQLBackups. In my case, the URL is https://swiftobjectstorage.us-ashburn-1.oraclecloud.com/v1/015010/MySQLBackups. You will need to change the tenancy (015010) and possibly the region (us-ashburn-1).

Username: This is the user for which we created a token when setting up OCI. It’s not the name with which you sign in to your MyServices Dashboard.

Password: This is the authentication token created in Step #4.
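The Cloud Storage Container value is easy to mistype, so here is a minimal sketch that assembles it from its three parts using the format given above. The function name and the basic validation are assumptions for illustration; the sample values are the ones from this walkthrough and will differ for your account.

```python
def swift_container_url(region, namespace, container):
    """Assemble the Swift-style object storage URL from region, namespace (tenancy) and container."""
    for name, value in (("region", region), ("namespace", namespace), ("container", container)):
        # Reject empty parts and stray slashes that would silently change the URL path.
        if not value or "/" in value:
            raise ValueError(f"invalid {name}: {value!r}")
    return f"https://swiftobjectstorage.{region}.oraclecloud.com/v1/{namespace}/{container}"


# Values from this guide: region us-ashburn-1, tenancy 015010, bucket MySQLBackups.
print(swift_container_url("us-ashburn-1", "015010", "MySQLBackups"))
```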

COMPLETION

Click Next and Create. After a few minutes, your MySQL instance will be running. This process has deployed MySQL onto an Oracle Compute instance (similar to EC2 instance) on the next generation datacenter. Once it’s created, identify the public IP address and ssh into the instance. Use ssh opc@xxxxxx to log into the instance. From here, you have full control of your instance.

NEXT STEPS

Of course, that’s a lot of steps to create a MySQL Cloud Service. Further automation is in development. In future blogs, I’ll include Terraform automation instructions. Additionally, with multiple ADs, we can set up replication for high availability.

Thanks for reading. I hope this was helpful.

via Planet MySQL


Visual Guide: What the Actual Trees of 52 Different Wood Species Look Like

If you’ve seen our excellent series on different species of wood, by looking at boards you can identify the ones most commonly used in furniture and homebuilding. But do you know what an actual Poplar, Walnut or Zebrawood tree looks like? Could you actually draw one if you were playing some forestry version of Pictionary?

Well, here’s a visual guide if you’re curious:

via Core77


The Star Wars Canon Behind Solo’s Biggest Surprise

Solo: A Star Wars Story offers a shadier look into the period between Revenge of the Sith and A New Hope, removed from stories of the Rebellion vs. the Empire, and Jedi vs. Sith… mostly. But if you’ve only kept up with the Star Wars movies, and not the entirety of the multimedia behemoth that the franchise has become, one of Solo’s greatest surprises is likely also its most baffling. If you were confused, then here’s what you need to know.

Late into the events of Solo, Bantha poodoo has hit the fan in the most spectacular of manners for Han and his friends. Their attempt to deceive criminal overlord Dryden Vos out of the deal they’d cut with him goes sideways when Han’s would-be mentor Beckett double-crosses the group, and Dryden himself is killed in a scrap with Qi’ra and Han. As Han chases after Beckett, Qi’ra, although now seemingly free of the grip the Crimson Dawn crime syndicate has on her, chooses to return to the fold rather than running off with Han. She opens a communication channel to the Dawn’s high command—revealing the syndicate is run by a very familiar face:

Darth Maul himself. Yes, if you’ve only kept up with the movies, Maul was last seen getting lopped in half by a young Obi-Wan Kenobi during the climax of The Phantom Menace, seemingly plummeting to his death on Naboo. But Maul survived that not-so-fatal blow, and has actually been around for quite a while again in Star Wars canon, even before Disney purchased Lucasfilm. In fact, to know more about how Maul got from The Phantom Menace to Solo (and from half a Zabrak to a whole person again, more or less), we need to head into the world of Star Wars animation.

The Return of Maul

Maul’s re-emergence into Star Wars canon begins in the Clone Wars TV series’ fourth season, which introduced Maul’s brother, the exquisitely named Savage Opress. Savage and Maul (and their other brother, who, I kid you not, is named Feral) grew up on the planet Dathomir, home to the fabled, witchy Force users known as the Nightsisters. While Maul was plucked from the world by Darth Sidious to become his apprentice, Savage was left behind until the Clone Wars, when the dark assassin Asajj Ventress (a former Nightsister herself) headed there after being betrayed by Count Dooku.

Ventress chose Savage as her apprentice in a quest for revenge against Dooku, but after even more betrayals—lots of betrayal among Dark Side users, as you’d expect—Savage struck out on his own, operating on information given to him by the leader of the Nightsisters, Mother Talzin, that Maul had survived his battle on Naboo and was living on the junkyard world of Lotho Minor.

That indeed proved to be the case—surviving his duel with Obi-Wan by feasting on the Dark Side energies of his own rage (at being chopped in half!), Maul managed to save his upper body from destruction and drag himself to a trash container, which was then dumped on Lotho Minor. In the years between his “death” on Naboo and Savage finding him—during which he cobbled himself a set of spider-y mechanical lower limbs—Maul’s mind was fractured, not just by his defeat but by Sidious’ abandonment of him. All that was left was a singular, all-encompassing thirst for vengeance against the Jedi and Obi-Wan, which Savage and Talzin were all too willing to stoke.

After bringing him back to Dathomir and using Nightsister magic to restore his mind (and replace Maul’s spider-y legs with more humanoid ones), Savage found himself apprenticed to Maul in a quest to destroy Obi-Wan, one that eventually culminated in a duel between Maul, his hated enemy, and the Jedi Knight Adi Gallia. Gallia fell in battle, but Obi-Wan turned the tide on the Zabrak brothers, leading to their retreat. Maul immediately hatched more plans to get at Kenobi.

The Lord of Mandalore

Maul and Savage went big for their next grab at power, allying themselves with the Mandalorian Death Watch group’s Pre Vizsla in their bid to overthrow the peaceful diplomatic rule of Mandalore’s current ruler, Satine Kryze (who was also once a potential paramour for Obi-Wan, before he had to distance his attachment to her). Using an army of Black Sun gangsters to bolster the Death Watch’s forces, the trio formed the Shadow Collective, and staged a coup attempt on Mandalore, but Vizsla betrayed Maul (gasp!), trying to take rule of the planet for himself, leading to a duel between the two that culminated with Maul taking Vizsla’s weapon, the legendary Mandalorian Darksaber, and beheading the Death Watch leader, taking his place as the current ruler of Mandalore.

Using Satine as a hostage to draw Obi-Wan out alone, Maul enacted a fraction of his vengeance against the Jedi by killing Satine in front of him, but his victory over Obi-Wan was short-lived. The Death Watch forces that refused to pledge loyalty to him after Vizsla’s death freed Obi-Wan from prison, but worse, Maul’s re-emergence on such a major stage attracted the attention of a far bigger threat: his former master, Darth Sidious.

Sidious came to Mandalore to ensure that the Sith’s rule of two—currently taking the form of Sidious and Dooku—stayed true, dueling both Maul and Savage and ultimately killing the latter. Maul was kept alive by Sidious, however, who planned to use him to draw out Talzin, so he could eliminate the last of any meddlesome threats standing in the way of his imminent ascendance to rule over the entire galaxy.

The Rise of the Empire and Solo

Although Clone Wars came to an end before Sidious’ plans for Maul could be shown on screen, the gaps were filled in by both the Darth Maul: Son of Dathomir comic (one of the last Star Wars comics published by Dark Horse before Disney’s purchase of Lucasfilm led to the rights transferring over to Marvel, and adapted from a scrapped Clone Wars storyline) and the Ahsoka novel. The former sees Sidious successfully eradicate Talzin and the Nightsisters, leading to Maul fleeing once again back to his stronghold on Mandalore, only for the latter to see Ahsoka Tano joining Republic forces in the final days of the Clone Wars to help liberate the planet from Maul and his Shadow Collective, although Maul escaped.

It is after these two stories that Maul’s Solo appearance lies. Solo is largely set in the earlier half of the 20-year gap between Revenge of the Sith and A New Hope, making Maul’s move back into the world of organized crime in the Crimson Dawn (itself based on Dathomir, according to his call with Qi’ra in the movie, so Maul is back on his homeworld) an unsurprising leap, given his history of working with gangsters and criminals during the formation of the Shadow Collective. Although we don’t know for sure, perhaps the Dawn is made up of what was left of Maul’s loyal forces from the Collective, which would explain why Maul is its overarching leader. Now that Maul has returned to the cinematic portion of the Star Wars universe, it’s likely that these gaps will be filled in through comics and books, giving us the exact details of how the Dawn and Maul rose to prominence in the galactic underworld.

But Maul’s story doesn’t end with Solo. We’ve already seen its end, which happens years after the events of the movie, in the recently concluded Star Wars Rebels TV series.

The Final End of Darth Maul

Maul returned to TV screens during the climax of Rebels’ second season, set just a handful of years before the events of A New Hope. Fledgling Jedi-in-training Ezra Bridger encountered him on the planet Malachor, as the former Sith searched for Dark Side artifacts he could use to bring down Sidious and his Empire. It was suggested he’d been there for some time, implying that either Maul had left the Crimson Dawn in the intervening years or that the crime syndicate had become severely diminished.

Maul being Maul, he betrayed Ezra and fled Malachor, only to capture Ezra and his Rebel friends several months later in an attempt to gain access to an old Jedi Holocron saved by Ezra’s master, Kanan. Combining the Holocron with a Sith one he had recovered on Malachor, Maul was granted a vision confirming that his hated foe Obi-Wan was alive and hiding on a planet with twin suns, leading to a final conflict that, for Maul, had been decades in the making.

After locating Obi-Wan on Tatooine, however, Maul’s quest for vengeance came to an unexpected end… for him. Once Maul had figured out that Obi-Wan’s exile on the desert world was not to avoid the eye of the Empire, but to protect someone capable of bringing it down, the aged Jedi cut down Maul for good. Although unsuccessful in his own quest to kill Obi-Wan, Maul used his dying words to ask if Kenobi’s charge was the fated chosen one. Kenobi replied in the affirmative, and Maul died knowing at least someone would get the vengeance against Darth Sidious that he had so desperately craved.

Although we’ve seen a great deal, Solo proves there is still so much about the life and times of Maul—previously nothing more than a cool-looking henchman who appeared in a single movie—left to explore. A lot of the gaps have already been filled in thanks to Clone Wars and Rebels, but his surprising return in the latest Star Wars film means there’s still a lot more we have to learn about the former Dark Lord of the Sith.

via Gizmodo

The Star Wars Canon Behind Solo’s Biggest Surprise

The first impression of MySQL 8 system variable innodb_dedicated_server

We manage several hundred MySQL servers, and we carefully benchmark and build custom database infrastructure operations for performance, scalability, availability and reliability. But what if MySQL could auto-size the system variables innodb_buffer_pool_size, innodb_log_file_size and innodb_flush_method? These are the top three system variables we consider when tuning MySQL performance, so when we first read about this feature we got very excited, did some research and decided to write this post.

What was our first reaction when we read about innodb_dedicated_server?

Wow, that will be awesome! When you manage several hundred MySQL instances, this feature could really improve efficiency and DBA Ops governance.

Now, let us explain what we have found.

How does the innodb_dedicated_server system variable in MySQL 8.0 size the following variables?

- innodb_buffer_pool_size:

  - <1G: 128M (default value if innodb_dedicated_server is disabled / OFF)

  - <=4G: Detected Physical RAM * 0.5

  - >4G: Detected Physical RAM * 0.75

- innodb_log_file_size:

  - <1G: 48M (default value if innodb_dedicated_server is OFF)

  - <=4G: 128M

  - <=8G: 512M

  - <=16G: 1024M

  - >16G: 2G

- innodb_flush_method:

  - Set to O_DIRECT_NO_FSYNC if the setting is available on the system. If not, set it to the default InnoDB flush method
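The sizing rules above can be sketched in a few lines of Python. This is only an illustration of the thresholds as listed in this post, not MySQL's actual implementation. The function name `dedicated_server_sizes` and its parameters are hypothetical, and treating "G" and "M" as GiB and MiB is an assumption.

```python
GIB = 1024 ** 3  # assumption: "G" in the list above means GiB
MIB = 1024 ** 2  # assumption: "M" in the list above means MiB


def dedicated_server_sizes(ram_bytes, o_direct_no_fsync_available=True):
    """Return the values the rules above would pick for a given amount of RAM.

    Hypothetical helper for illustration; it only encodes the thresholds
    listed in this post.
    """
    # innodb_buffer_pool_size: fixed 128M below 1G, otherwise a share of RAM
    if ram_bytes < 1 * GIB:
        buffer_pool = 128 * MIB
    elif ram_bytes <= 4 * GIB:
        buffer_pool = ram_bytes // 2        # detected RAM * 0.5
    else:
        buffer_pool = ram_bytes * 3 // 4    # detected RAM * 0.75

    # innodb_log_file_size: a step function of detected RAM
    if ram_bytes < 1 * GIB:
        log_file = 48 * MIB
    elif ram_bytes <= 4 * GIB:
        log_file = 128 * MIB
    elif ram_bytes <= 8 * GIB:
        log_file = 512 * MIB
    elif ram_bytes <= 16 * GIB:
        log_file = 1024 * MIB
    else:
        log_file = 2 * GIB

    # innodb_flush_method: None here means "keep the default InnoDB flush method"
    flush_method = "O_DIRECT_NO_FSYNC" if o_direct_no_fsync_available else None

    return {
        "innodb_buffer_pool_size": buffer_pool,
        "innodb_log_file_size": log_file,
        "innodb_flush_method": flush_method,
    }


if __name__ == "__main__":
    for gib in (0.5, 4, 8, 32):
        sizes = dedicated_server_sizes(int(gib * GIB))
        print(gib, "GiB RAM ->", sizes)
```

A helper like this can be handy for checking in advance what a host would get from auto sizing and comparing it with values chosen by hand.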

Our first impression of the innodb_dedicated_server system variable in MySQL 8.0 is a good one: it should deliver much better performance than the default values. This new feature configures the MySQL system variables mentioned above more intuitively, which should improve DBA productivity. Up to MySQL 5.7, the default settings always presumed a server with 512M of RAM.

Are we going to follow this in our daily DBA checklists?

Not really. We are a very conservative team when it comes to rolling out new features immediately in our customers’ critical database infrastructure, and we are also wary of isolated issues caused by auto sizing of MySQL / InnoDB memory structures. Let us explain why we will not be using this feature immediately for our MySQL 8.0 customers:

- We carefully size InnoDB memory parameters based on various factors like database size, transaction complexity, archiving policies etc. So we prefer to stay hands-on and manually size the system variables innodb_buffer_pool_size, innodb_log_file_size and innodb_flush_method.

- Capacity planning and sizing: we are always wary of over- or undersizing our database infrastructure. Its reliability is critical for us, and we have a dedicated in-house team that monitors and trends database infrastructure operations and system resource usage.

P.S. The innodb_dedicated_server system variable is a relatively new feature. We are confident the MySQL engineering team will keep improving this component, and our perspective may change as it does. If that happens, we will be sure to blog about the feature and why we are seriously thinking about implementing it for our customers’ production infrastructure. Technology keeps changing for the better, and we adapt to the change!

The post The first impression of MySQL 8 system variable innodb_dedicated_server appeared first on MySQL Consulting, Support and Remote DBA Services By MinervaDB.

via Planet MySQL

Make elotes, Mexican corn-on-the-cob and summer’s perfect dish

It wasn’t the most authentic of settings, but my maiden voyage to the land of Mexican elotes took place via a Chicago White Sox game. Two thoughts stuck: “These greedy bastards are charging $6 for a small tray of corn?!” More consequentially: “Boy, sweet corn with mayo + butter + cheese + lime + chili is mighty delicious.”

Like baseball, fireflies, and small-town parades, elotes are a harbinger of summer, a simple but impressive and indulgent showcase for sweet corn. It’s an all-dressed-up version of corn-on-the-cob, where a slather of mayonnaise and butter helps cheese and chili powder adhere to the kernels. The overpriced ballpark elotes left such an impression that the next day I sought out a more genuine version in Chicago.

I found it in the city’s Little Village neighborhood, the residential and economic heart of Chicago’s Mexican populace. In a city where regulation and red tape all but suppress street food culture, the only carts I encountered were for elotes and tamales. At several of these elotes stands, the ritual was the same: The vendor would remove a corn-on-the-cob from a steaming cooler. Holding the cob upright by its stick, she would slice vertically, the kernels landing on a plastic mat. She would fold the plastic mat in half and dump its contents into a styrofoam cup. The vendor would then juice a lime over the corn, scrape off a spatula’s worth of mayonnaise against the cup, squeeze on imitation butter, and spoon the feta-like cotija cheese and chili powder on top. This set me back $2.50, though the norm is to hand over three singles and tell her to keep it. (Purists will argue elotes is served on-the-cob, while esquites is off-the-cob and pan-fried. But elotes has become the catch-all word for the dish, and the term we’ll use here.)

Whether served on the cob or off, this marriage of sweet, fat, spice, citrus, and salty cheese is dangerous and enticing, a combination that sounds more like a misprint than something carefully considered. The undisputed best way to enjoy sweet corn is grilled with a pat of butter, so elotes might be seen as a next-level application, a justified gilding of the lily.

As with all dishes, but especially with elotes, achieving balance is key. While you could easily cut the corn and fold in the mayonnaise, cheese, and spices (which also makes it easier to eat), there’s just something tactilely and visually satisfying about serving elotes on the cob (plus, it discourages you from adding too much mayonnaise, as a light slather on the cob suffices). The recipe below comes via chef Andres Padilla of Chicago’s Topolobampo, founded by Rick Bayless and recently awarded outstanding restaurant, the top prize of the James Beard Foundation.

In this recipe, Mexican crema (or sour cream) is employed, though you can substitute the more common mayonnaise. Boiled corn will also do, but please consider taking the extra step of grilling whole cobs over charcoal. It doesn’t even compare.

Elote asado (charcoal-grilled corn with cream, cheese and chile)

Serves six; recipe courtesy Topolobampo in Chicago

- 6 ears fresh sweet corn, in their husks

- 3 Tbsp. unsalted butter, melted

- 1/2 cup thick cream or commercial sour cream mixed with a little milk or cream

- 1/3 cup crumbled Mexican queso anejo or queso fresco, or cheese like Parmesan, feta, cotija, or farmer’s cheese

- 1 Tbsp. hot powdered chile (ground chile de arbol, guajillo, or New Mexico chile)

- Limes

1. About an hour before serving, place the ears of corn in a deep bowl, cover with cold water and weight with a plate to keep them submerged. Light your charcoal fire and let it burn until the bed of coals is medium-hot; adjust the grill four inches above the fire.

2. Lay the corn on the grill and roast for 15 to 20 minutes, turning frequently, until the outer leaves are blackened. Remove, let cool several minutes, then remove the husks and silk. About 10 minutes before serving, brush the corn with melted butter, return to the grill and turn frequently until nicely browned. Serve right away, passing the cream, cheese, and powdered chile for your guests to use to their own liking. Serve with a wedge of lime.

Bonus variation: Esquites (as served in Toluca and Mexico City)

Cut the kernels from six cobs, then fry in three tablespoons lard, vegetable oil, or butter, with hot green chile to taste (seeded and sliced) and two or three tablespoons chopped epazote. Season with salt.

via Lifehacker

The Right Way to Kill Weeds

Getting rid of weeds is a pain. Pluck them, and they come back. Kill them with chemicals, and the ground is poisoned for other plants. These organic corn farmers demonstrate a much more reliable, chemical-free, and downright spectacular method to clear the ground – FIRE!