As MySQL HeatWave environments continue to grow, many organizations prioritize scaling compute and storage resources while overlooking a critical area: database housekeeping. Inadequate maintenance practices can result in excessive storage consumption, longer backup and recovery times, replication lag, degraded query performance, and increased operational costs. This blog highlights key database hy

Planet for the MySQL Community

MySQL Data at Rest: Architecture & Best Practices

https://cdn.prod.website-files.com/6717800cb1e973b8fc433b03/6a0c9cfd1d34e7ec60607e8f_MySQL%20Data%20at%20Rest%20Architecture%20%26%20Best%20Practices.avif

Securing MySQL Data at Rest: Architecture, Concepts, and Why It Matters

In today’s data-driven environments, protecting sensitive information is no longer optional, it is foundational. While organizations invest heavily in access controls and network security, one critical area is often misunderstood: data-at-rest encryption in MySQL.

Data-at-rest encryption ensures that even if database files are accessed outside MySQL through disk theft, backup exposure, or filesystem compromise, the data remains unreadable. This post explains how MySQL implements encryption internally, what it protects, and why a well-designed encryption strategy is necessary for modern systems.

Understanding the Real-World Threat Model

Before configuring encryption, you must understand the exact risks it mitigates. Data-at-rest encryption in MySQL protects against scenarios such as disk theft, backup exposure, and filesystem compromise.

However, encryption operates exclusively at the storage layer. It does not protect against:

- Valid database users executing queries.

- Application-level vulnerabilities.

- Compromised database credentials.

How MySQL Implements Data-at-Rest Encryption

MySQL uses InnoDB storage engine encryption, which is designed to be transparent to applications and efficient at runtime.

Key Characteristics:

- Encryption is applied at the tablespace level.

- Data is encrypted at the page level.

- Pages are encrypted as they are written to disk and decrypted as they are read, so they are held unencrypted only in memory (within the InnoDB buffer pool).

- Plaintext data is never written to the physical disk.

This design ensures that your applications require absolutely no code changes, maintaining strong protection at the storage level without sacrificing usability.

Encryption Architecture: A Layered Approach

MySQL relies on a hierarchical key structure to manage encryption securely.

Key Hierarchy

- Master Key: Stored securely in the MySQL keyring.

- Tablespace Key: A unique key generated for each individual tablespace.

- Encrypted Data Pages: The actual user data stored on disk.

How It Works:

Each tablespace is encrypted using its own unique tablespace key. To secure the system further, the tablespace key is then encrypted using the master key. Finally, the master key is stored in the keyring. This layered approach improves overall security and allows administrators to rotate keys efficiently without needing to re-encrypt entire tablespaces.
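The layering described above can be illustrated with a small sketch. This is a toy model, not InnoDB's implementation: the cipher is a SHA-256 keystream XOR chosen only so the example runs with the standard library (InnoDB uses AES), and the names ToyTablespace, keystream_xor, and rotate_master_key are hypothetical. What it shows is the property the text relies on: rotating the master key re-wraps the small tablespace key and leaves the encrypted pages untouched.

```python
import hashlib
import secrets


def keystream_xor(key: bytes, data: bytes) -> bytes:
    """Toy stream cipher: XOR data with a SHA-256 counter keystream.

    Illustration only. InnoDB uses AES, not this construction.
    """
    stream = bytearray()
    counter = 0
    while len(stream) < len(data):
        stream.extend(hashlib.sha256(key + counter.to_bytes(8, "big")).digest())
        counter += 1
    # zip() stops at len(data), so the extra keystream bytes are ignored.
    return bytes(a ^ b for a, b in zip(data, stream))


class ToyTablespace:
    """Hypothetical model: pages are encrypted with a per-tablespace key,
    and that key is stored wrapped (encrypted) by the master key."""

    def __init__(self, master_key: bytes, plaintext_page: bytes):
        tablespace_key = secrets.token_bytes(32)
        self.encrypted_page = keystream_xor(tablespace_key, plaintext_page)
        self.wrapped_key = keystream_xor(master_key, tablespace_key)

    def read_page(self, master_key: bytes) -> bytes:
        tablespace_key = keystream_xor(master_key, self.wrapped_key)
        return keystream_xor(tablespace_key, self.encrypted_page)

    def rotate_master_key(self, old_master: bytes, new_master: bytes) -> None:
        # Only the small wrapped key is re-encrypted; pages are not rewritten.
        tablespace_key = keystream_xor(old_master, self.wrapped_key)
        self.wrapped_key = keystream_xor(new_master, tablespace_key)
```

After a rotation, encrypted_page is byte-for-byte unchanged while read_page succeeds only with the new master key, which is why rotation cost does not grow with table size.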

What Should Be Encrypted in MySQL

A complete encryption strategy involves much more than just securing your tables.

To prevent data leaks, the following components must be considered:

- Tablespaces: These hold the actual user data and are the primary target for encryption.

- Undo Tablespaces: Undo logs store previous versions of rows, which may include deleted or modified data. Encrypting them stops the exposure of historical data.

- Redo Logs: Redo logs capture recent database changes and transaction history. They often contain highly sensitive before-and-after values.

- Binary Logs: Binary logs track all database modifications. They are essential for MySQL replication and point-in-time recovery.

- Relay Logs: Existing on replica servers, relay logs mirror the primary server’s binary logs, making them equally sensitive.

Encrypting only your tablespaces leaves these other layers heavily exposed. Full coverage guarantees your data is protected across all storage components.

Key Management: The Core of Encryption

Encryption is only as strong as its key management. The MySQL keyring carries several vital responsibilities:

- Storing the master encryption key.

- Enabling the encryption and decryption of tablespace keys.

- Supporting secure key rotation.

Example Configuration (MySQL 8.0)

early-plugin-load=keyring_file.so

keyring_file_data=/secure/keyring/keyring

Verification

SHOW PLUGINS;

Expected output:

keyring_file | ACTIVE

Best Practices for Keyring Management:

- Store keyring files with strictly restricted OS permissions.

- Keep your keyring storage logically and physically separate from your database files.

- Always include the keyring in your database backup strategy.

MySQL 8.0 vs MySQL 8.4: Evolution of the Keyring

MySQL 8.4 standardizes on the component-based keyring system (first available in later MySQL 8.0 releases), shifting away from the older plugin methods.

- MySQL 8.0: Utilizes a plugin-based approach, which requires the plugin to be loaded early during server startup (early-plugin-load).

- MySQL 8.4: Utilizes a component-based architecture, offering better flexibility, easier configuration, and long-term maintainability.

Verification in MySQL 8.4

SELECT * FROM performance_schema.keyring_component_status;

Why Data-at-Rest Encryption Is Essential

Implementing encryption extends beyond basic security. It actively supports:

- Regulatory Compliance: Meeting strict standards for PCI-DSS, GDPR, and HIPAA.

- Accidental Exposure Prevention: Stopping data leaks from misplaced backups or retired hardware.

- Defense Against Infrastructure Threats: Adding a final layer of protection against hardware and OS-level breaches.

Modern infrastructure planning must assume that storage layers could eventually be accessed by unauthorized parties.

Conclusion

Data-at-rest encryption in MySQL provides a reliable method to defend sensitive data from unauthorized file-level access. However, its actual effectiveness relies on a complete approach. You must encrypt all relevant database components, manage your encryption keys safely, and clearly understand the scope and limitations of the feature. When set up correctly, data-at-rest encryption acts as a necessary layer in a defense-in-depth strategy.

Data-at-rest encryption in MySQL is a foundational defense-in-depth strategy that protects sensitive information from physical disk theft, backup exposure, and unauthorized filesystem access by ensuring plaintext data is never written to disk. Utilizing InnoDB’s transparent, layered architecture, it secures data via a master key stored in a keyring—which has evolved from a plugin system in MySQL 8.0 to a modern component-based model in MySQL 8.4—to safely encrypt individual tablespace keys.

To achieve true regulatory compliance (like GDPR or HIPAA) and robust security, organizations must extend this encryption beyond standard tablespaces to include undo logs, redo logs, binary logs, and relay logs, ensuring complete data protection backed by strict, isolated key management practices.

Secure Your MySQL Infrastructure

Ensure your database meets strict compliance standards and is fully protected against file-level threats. Our experts at Mydbops are ready to audit, configure, and manage your MySQL encryption strategy.

Planet for the MySQL Community

Implementing MySQL Data-at-Rest Encryption: Step-by-Step Guide

https://cdn.prod.website-files.com/6717800cb1e973b8fc433b03/6a0bf6640fd479be346bd089_Implementing%20MySQL%20Data-at-Rest%20Encryption.avif

Implementing MySQL Data-at-Rest Encryption

Implementing encryption in MySQL requires careful planning, correct configuration, and operational discipline. While the feature itself is straightforward, a production deployment involves multiple components such as keyring setup, table encryption, log encryption, backups, and performance validation.

This guide provides a detailed, step-by-step approach to configuring MySQL data-at-rest encryption in a production environment, focusing on the latest standards in MySQL 8.4.

Phase 1: Setting Up the Keyring (MySQL 8.4 Component-Based Approach)

In MySQL 8.4, the keyring architecture transitioned from a plugin-based model to a component-based framework. This update improves flexibility, maintainability, and compatibility with modern MySQL infrastructure.

Unlike MySQL 8.0, where early-plugin-load was necessary, MySQL 8.4 uses a component manifest and configuration file. The keyring initializes automatically during server startup.

1. Understanding the Keyring Components

The keyring setup in MySQL 8.4 involves four main elements:

- Component: component_keyring_file

- Configuration file: component_keyring_file.cnf

- Data file: component_keyring_datafile

- Manifest file: mysqld.my

These elements work together to ensure the keyring loads before InnoDB initializes.

[Figure: MySQL 8.4 keyring component initialization flow]

2. Create Keyring Configuration File

First, create the configuration file to define where the database will store the keyring data.

vi /etc/mysql/component_keyring_file.cnf

Add the following configuration:

{

"path": "/secure/keyring/component_keyring_datafile"

}

3. Create and Secure the Keyring Directory

Create the directory and assign the correct ownership and permissions. This prevents unauthorized OS-level access.

mkdir -p /secure/keyring

chown -R mysql:mysql /secure/keyring

chmod 700 /secure/keyring
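As a follow-up check, a short script can confirm that the directory really ended up with mode 700. This is a minimal sketch using only the standard library; the function name keyring_dir_is_private is hypothetical, and it checks the mode bits only, not the mysql:mysql ownership set by chown.

```python
import os
import stat


def keyring_dir_is_private(path: str) -> bool:
    """Return True if `path` is a directory whose permission bits are 0o700."""
    info = os.stat(path)
    return stat.S_ISDIR(info.st_mode) and stat.S_IMODE(info.st_mode) == 0o700
```

Calling keyring_dir_is_private("/secure/keyring") as part of a routine health check would flag a directory whose permissions were loosened after setup.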

4. Create the Manifest File

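The manifest file instructs the server to load the keyring component at startup. Note that the server reads its global manifest from the directory that contains the mysqld binary; a mysqld.my in the data directory is treated as a local manifest and is honored only when the global manifest enables read_local_manifest.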

vi /var/lib/mysql/mysqld.my

Add the following content:

{

"components": "file://component_keyring_file"

}
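Because both files are JSON, a syntax slip such as a missing quote is easy to make and is only noticed at restart. The sketch below parses the two documents before the restart step; it is an assumption-laden helper, not part of MySQL, and the function names are hypothetical. It checks only the keys used in this guide ("path" and "components").

```python
import json


def keyring_datafile_path(config_text: str) -> str:
    """Parse component_keyring_file.cnf content and return its "path" value.

    Raises ValueError if the text is not a JSON object with a string "path".
    """
    try:
        config = json.loads(config_text)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(config, dict) or not isinstance(config.get("path"), str):
        raise ValueError('expected a JSON object with a string "path" entry')
    return config["path"]


def manifest_components(manifest_text: str) -> list[str]:
    """Parse mysqld.my content and return the component URNs it lists."""
    manifest = json.loads(manifest_text)
    return [urn.strip() for urn in manifest["components"].split(",")]
```

Feeding each file's contents through these functions before running the restart catches malformed JSON while the server is still up.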

5. Restart MySQL Service

Apply the changes by restarting the MySQL service.

systemctl restart mysqld

6. Verify Keyring Component Status

Run the following query to confirm the keyring is active.

SELECT * FROM performance_schema.keyring_component_status;

Expected Output:

component_keyring_file | ACTIVE | YES

This output confirms the keyring component is operational.

Phase 2: Enabling Tablespace Encryption

With the keyring in place, you can now encrypt your data. Tablespace encryption protects your tables at the storage level.

Encrypt New Tables

Specify the encryption clause when creating a new table.

CREATE TABLE customers (

id INT PRIMARY KEY,

name VARCHAR(100)

) ENCRYPTION='Y';

Encrypt Existing Tables

Alter existing tables to enable encryption.

ALTER TABLE customers ENCRYPTION='Y';

Set Global Default

To make encryption the default for all new tables, set the following variable.

default_table_encryption=ON

Verify Encryption

Check the encryption status of your tables using the information schema.

SELECT NAME, ENCRYPTION FROM information_schema.INNODB_TABLESPACES;


🔗 Fulfilled Tablespace Encryption (TDE) in Percona Cluster: for teams running Percona XtraDB Cluster who need to apply TDE across nodes.

🔗 Shield Your Sensitive Data: MySQL Data Encryption at Rest: a webinar recap covering encryption at rest concepts, and a good supplementary read for teams new to the topic.

Phase 3: Encrypting Logs

To achieve complete data-at-rest protection, you must also encrypt the database logs. Unencrypted logs can expose sensitive data even if the tablespaces are secure.

Redo Log Encryption

innodb_redo_log_encrypt=ON

Undo Log Encryption

innodb_undo_log_encrypt=ON

Binary Log Encryption

binlog_encryption=ON

🔗 How to Use the MySQL 8.4 Audit Log Filter: key rotation and encryption events should be captured in audit logs, and this guide explains how to set up granular audit filtering in MySQL 8.4.
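Since partial coverage is the main risk here, it can help to check an option file for all four settings named in Phases 2 and 3 at once. The following is a rough sketch, not a full option-file parser: it ignores include directives and option groups, and the function name missing_encryption_settings is hypothetical.

```python
REQUIRED = (
    "default_table_encryption",
    "innodb_redo_log_encrypt",
    "innodb_undo_log_encrypt",
    "binlog_encryption",
)


def missing_encryption_settings(option_file_text: str) -> list[str]:
    """Return the required settings that are absent or not set to ON."""
    settings = {}
    for raw in option_file_text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments
        if "=" not in line:
            continue  # skips group headers such as [mysqld]
        name, value = (part.strip() for part in line.split("=", 1))
        # Option files accept dashes and underscores interchangeably;
        # a later assignment overrides an earlier one.
        settings[name.replace("-", "_").lower()] = value.upper() in {"ON", "1"}
    return [name for name in REQUIRED if not settings.get(name, False)]
```

An empty list means every setting from this guide is switched on in the text that was checked; it does not prove the running server picked them up, so the SQL verification steps still apply.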

Phase 4: Master Key Rotation

Periodic key rotation is a standard security requirement. Rotating the master key generates a new key and re-encrypts the tablespace keys, but it does not require re-encrypting the actual table data.

ALTER INSTANCE ROTATE INNODB MASTER KEY;

Executing ALTER INSTANCE ROTATE INNODB MASTER KEY signals the active keyring component to generate a new, random master encryption key (MEK).

Backup and Recovery Considerations

Encryption directly affects how you handle backups and recovery. Keep these principles in mind:

- Physical backups must include the keyring.

- Losing the keyring makes encrypted data permanently unrecoverable.

- Logical backups (like mysqldump) export data in plaintext and are not encrypted by default.

Example Keyring Backup Command:

cp /secure/keyring/component_keyring_datafile /backup/keyring
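Because a damaged keyring copy is as bad as a missing one, a backup script may want to verify the copy rather than trust the file copy alone. Below is a minimal sketch under that assumption; backup_keyring and sha256_of are hypothetical helper names, and the paths passed in are up to the caller.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hex SHA-256 digest of a file's contents."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def backup_keyring(source: str, backup_dir: str) -> Path:
    """Copy the keyring data file and verify the copy by comparing hashes."""
    src = Path(source)
    dest_dir = Path(backup_dir)
    dest_dir.mkdir(parents=True, exist_ok=True)
    # copy2 also preserves timestamps and permission bits where possible.
    dest = Path(shutil.copy2(src, dest_dir / src.name))
    if sha256_of(src) != sha256_of(dest):
        raise IOError(f"keyring backup mismatch: {dest}")
    return dest
```

The backup destination should itself be access-restricted and kept apart from the database backups, in line with the keyring practices described earlier.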

Performance Impact and Benchmarking

Encryption adds a measurable workload to your database server. Before deploying to production, benchmark the performance impact in a staging environment.

Common observations include:

- Query latency increases moderately.

- Commit latency increases slightly due to redo log encryption.

- Overall CPU and I/O usage will rise.

Example Metrics (simulated, AES-256):

- Average query latency: 12.3 ms → 14.7 ms (+19.5%)

- Commit latency: 1.2 ms → 1.8 ms (+50.0%)

- Overall CPU overhead, baseline vs. encryption active: +8.2%
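When you run your own staging benchmark, the relative overhead is simply the latency difference divided by the baseline. A small helper, with the hypothetical name percent_increase, makes the arithmetic explicit and reproduces the percentages for the example latency figures above.

```python
def percent_increase(baseline_ms: float, encrypted_ms: float) -> float:
    """Relative latency increase in percent, rounded to one decimal place."""
    return round((encrypted_ms - baseline_ms) / baseline_ms * 100, 1)


print(percent_increase(12.3, 14.7))  # query latency example
print(percent_increase(1.2, 1.8))    # commit latency example
```

Comparing percentages rather than raw milliseconds makes it easier to set an acceptance threshold that applies across different workloads.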

Operational Best Practices

To maintain a secure and stable environment, follow these operational rules:

- Restrict OS-level access: Limit access to the database data directory and the keyring folder.

- Enforce minimal privileges: Apply the principle of least privilege for all database users.

- Align replication nodes: Ensure encryption configurations are identical across all primary and replica nodes.

- Monitor status regularly: Track the state of your keyring and encryption variables as part of your routine checks.

🔗 Fastest Parallel Replication in MySQL 8: for teams aligning encryption settings across replication nodes, this explains replication internals relevant to that process.

Common Pitfalls to Avoid

Many encryption issues surface only during a critical incident. Avoid these frequent mistakes:

- Failing to back up the keyring file.

- Skipping routine recovery tests.

- Forgetting to enable binary log encryption.

- Deploying to production without proper performance benchmarking.

These oversights often lead to costly delays or permanent data loss during a recovery scenario.

Conclusion

Implementing MySQL data-at-rest encryption is a structured process that extends beyond simple configuration. It requires strict attention to key management, operational workflows, and performance validation.

A well-planned execution keeps your data files unreadable to anyone without the keys, even in the event of a physical or storage-level compromise. For additional context on official specifications, consult the MySQL 8.4 Reference Manual on InnoDB Data Encryption.

Protecting your data at rest is just one part of a robust database strategy. Mydbops provides comprehensive MySQL support, ranging from 24/7 managed services and remote DBA assistance to architectural consulting and thorough security audits. Let our team handle the operational complexities and performance tuning to eliminate bottlenecks and keep your infrastructure secure.

Reach out today to secure and optimize your database environment.

Planet for the MySQL Community

Amazon Aurora MySQL 8.4 is now generally available

Amazon Aurora MySQL-Compatible Edition now supports MySQL 8.4, a community MySQL Long Term Support (LTS) major version. Aurora MySQL 8.4 launches with compatibility for community MySQL 8.4.7 and introduces aligned version numbering, so the version number you run on Aurora matches the community MySQL version it is compatible with. Aurora also manages the underlying patch on your behalf, simplifying day-to-day operations. Aurora MySQL now targets major versions within 12 months of community MySQL LTS releases, minor versions within 3 months of each community minor, and an Aurora LTS minor within 12 months of each major. For engine specific release objectives, see the Aurora and RDS open source release calendar announcement.

Aurora MySQL 8.4 strengthens security defaults for new clusters. TLS is enforced by default with only TLS 1.2 and 1.3 supported, new accounts use the caching_sha2_password authentication plugin, and password validation policies are customizable through DB cluster parameter groups. Automated upgrade prechecks identify compatibility issues before your cluster goes offline, giving you confidence before you upgrade. To learn more about the Aurora MySQL 8.4 customer experience, refer to the Aurora MySQL 8.4 launch announcement blog.

You can upgrade your database using Amazon RDS Blue/Green Deployments, in-place upgrade, or restore from a snapshot. Learn more about performing major version upgrades in the Amazon Aurora User Guide. You can also migrate to Aurora MySQL 8.4 from external MySQL sources using AWS Database Migration Service or Percona XtraBackup. Aurora MySQL 8.4 is available in all AWS Regions where Aurora MySQL is available.

Amazon Aurora MySQL is designed for unparalleled high performance and availability at global scale with full MySQL compatibility. It provides scale-to-zero serverless compute, Aurora Global Database for Multi-Region resilience, Aurora I/O-Optimized for improved price performance on I/O-intensive workloads, and built-in security and continuous backups. To get started with Amazon Aurora, take a look at our getting started page.

Planet for the MySQL Community

Listen to this trucker explain how “foreign” drivers are being sidelined just days after SCOTUS said companies are liable for them

https://media.notthebee.com/articles/6a08f3a813b946a08f3a813b95.jpg

Some good news for Americans planning a summer road trip:

Not the Bee

An Entire Wikipedia That’s 100% AI Hallucinations

"Every link leads to an entry that does not exist yet," explains the GitHub page for a Wikipedia-like site called Halupedia. "Until you click it, at which point an LLM pretends it has always existed and writes it for you, in the deadpan register of a 19th-century scholarly press…"

Every article is invented on demand. The footnotes are also lies… The hardest problem with an infinite, on-demand encyclopedia is internal contradiction… When the LLM writes an article, it is required to add a context="…" attribute on every <a> it inserts, summarising the future article it is linking to (e.g. context="19th-century clerk who formalized footnote drift, Pellbrick’s mentor")… When that target article is later requested for the first time, the worker loads the accumulated hints and injects them into the system prompt as "PRIOR REFERENCES — these are CANON". The LLM is instructed that the encyclopedia is hallucinated and absurd, but it must not contradict itself.

Fast Company reports that Halupedia was created by software developer Bartłomiej Strama, who confessed in a Reddit comment that the site came about after a drunk night with a friend. In the week since launch, he says Halupedia has amassed more than 150,000 users.

Beyond indulging in silly alternate histories, what’s the point of using Halupedia? Strama hinted at one larger purpose in a reply to a donor on his Buy Me a Coffee page: "Your contribution towards polluting LLM training data will surely benefit society!" he wrote.

The site is licensed as free software under the GPL-3.0 license. Thanks to long-time Slashdot reader schwit1 for sharing the news.

Read more of this story at Slashdot.

Slashdot

“Landmark” new study claims significant link between vaccines and autism. Details here.

https://media.notthebee.com/articles/6a05c6aae56926a05c6aae5693.jpg

Y’all know that the autism-vaccine debate is one of the most live-wire, hot-button issues right now. People come to blows over it, figuratively and probably sometimes literally. It’s a fraught question.

Not the Bee

Upgrade strategies for Amazon RDS for MySQL 8.0 to 8.4

https://d2908q01vomqb2.cloudfront.net/887309d048beef83ad3eabf2a79a64a389ab1c9f/2026/05/14/DB5369.png

Amazon Relational Database Service (Amazon RDS) for MySQL 8.0 is planned to reach end of standard support on July 31, 2026. To keep your databases up to date with bug fixes, security enhancements and the latest features, upgrade to MySQL version 8.4 before the current version reaches end of standard support. This is a major engine version upgrade.

When a new version is released for RDS for MySQL, you can choose how and when to upgrade your DB instances. However, major engine version upgrades can be complex and risky, introducing changes that might not work with existing applications and raising concerns about downtime and compatibility.

This post is part of a two-part series on upgrading RDS for MySQL 8.0 to 8.4. Here, we cover the end of standard support timeline, extended support costs, upgrade methods, and key best practices. For a step-by-step implementation guide, see Best practices for upgrading RDS for MySQL 8.0 to 8.4 with prechecks, Blue/Green, and rollback.

RDS for MySQL 8.0 end of standard support timeline

It is important to note that individual minor versions within MySQL 8.0 have their own end of support dates that might occur before the July 31, 2026 deadline. For example, minor version 8.0.28 reached its end of standard support on March 28, 2024. Always verify the support status of your specific minor version to avoid running on an unsupported version before the major version deadline. See the RDS for MySQL release calendar to stay up to date on end of standard support dates for various minor versions within a major version.

You can use the describe-db-engine-versions AWS Command Line Interface (AWS CLI) command to check available versions and their upgrade paths:

To check the available upgrade paths for your specific current version, run:

Excerpt from output:

This command helps you identify valid upgrade targets from your current version, so you can proactively plan your upgrade before end-of-support deadlines approach.
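One way to issue such a call from a script is sketched below. The subcommand and the --engine, --engine-version, --query, and --output options are standard AWS CLI options; the function names, the version string used in the usage note, and the particular --query expression are illustrative assumptions rather than the commands from the original post.

```python
import subprocess


def upgrade_targets_command(engine_version: str) -> list[str]:
    """Build the argv that lists valid upgrade targets for one MySQL version."""
    return [
        "aws", "rds", "describe-db-engine-versions",
        "--engine", "mysql",
        "--engine-version", engine_version,
        "--query", "DBEngineVersions[*].ValidUpgradeTarget[*].EngineVersion",
        "--output", "text",
    ]


def list_upgrade_targets(engine_version: str) -> str:
    """Run the command; needs the AWS CLI installed and credentials configured."""
    result = subprocess.run(
        upgrade_targets_command(engine_version),
        check=True, capture_output=True, text=True,
    )
    return result.stdout
```

For example, list_upgrade_targets("8.0.40") would return the target versions as text for that placeholder version; substitute the minor version your instance actually runs.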

Amazon RDS Extended Support

In September 2023, AWS announced Amazon RDS Extended Support, a paid offering that provides critical security and bug fixes for Amazon Aurora MySQL or Amazon RDS for MySQL major versions for up to three years after the end of standard support date. If you plan to upgrade at a later date, extended support gives you additional time to plan, test and execute major version upgrades without being rushed into an emergency migration.

Extended Support provides flexibility to upgrade on your timeline while maintaining full AWS support for security patches and critical bug fixes. It activates automatically after a major version’s end of standard support date and continues until you upgrade to a supported version, ensuring your databases remain secure and stable during your transition period. Extended Support charges are calculated on a per vCPU per hour basis. See Hardware specifications for DB instance classes to check the vCPUs corresponding to your DB instance class. The charges vary by AWS Region, with pricing structured based on how long a major version remains past its end of standard support date.

For a Multi-AZ deployment, both the primary and the standby instance are billed independently, because each runs the same instance class and carries the same vCPU count. This effectively doubles your Extended Support cost compared to a Single-AZ deployment. See the following example cost table for us-east-1 Region.

| Deployment type | Instance class | vCPU count | Year 1-2 | Year 3 |
| --- | --- | --- | --- | --- |
| Single-AZ (SAZ) | db.r5.xlarge | 4 | $292/month | $584/month |
| Multi-AZ (MAZ) | db.r5.xlarge | 8 | $576/month | $1,152/month |
| MAZ primary with a SAZ read replica | db.r5.xlarge | 12 | $864/month | $1,728/month |
| MAZ primary with a MAZ read replica | db.r5.xlarge | 16 | $1,168/month | $2,336/month |

For accurate, Region-specific pricing, refer to Amazon RDS Extended Support costs in RDS for MySQL pricing. You can also use the AWS Pricing Calculator to model your specific fleet.
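The per-vCPU-per-hour model can be worked through for the example table. The sketch below is an estimate only: the $0.100 rate is inferred from the Single-AZ row (292 / (4 × 730)) and is not quoted from a price list, and 730 hours per month is an assumed convention. With those assumptions the formula reproduces the 4-vCPU and 16-vCPU rows, while the 8-vCPU and 12-vCPU rows in the table correspond to 720 hours per month.

```python
from decimal import Decimal

HOURS_PER_MONTH = 730  # assumption: a common monthly-hours convention

# Inferred from the Single-AZ example row; not an official price.
YEAR_1_2_RATE = Decimal("0.100")


def monthly_extended_support_cost(
    vcpus: int,
    rate_per_vcpu_hour: Decimal,
    hours: int = HOURS_PER_MONTH,
) -> Decimal:
    """Monthly charge = vCPUs x hourly rate per vCPU x hours in the month."""
    return vcpus * rate_per_vcpu_hour * hours


for vcpus in (4, 8, 12, 16):
    print(vcpus, monthly_extended_support_cost(vcpus, YEAR_1_2_RATE))
```

Decimal is used instead of float so that currency amounts compare exactly; for real budgeting, take the rate for your Region and year from the pricing page.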

Key changes in MySQL 8.4

As with any major version upgrade, there are important changes you should be aware of before upgrading so you can test your database and applications accordingly. MySQL 8.4 introduces meaningful behavioral and configuration changes from 8.0 that require thorough validation before migration. We call out a few important changes in this post. Refer to Changes in MySQL 8.4 as your primary reference for the complete list of changes.

In MySQL 8.0 (on RDS), the default authentication plugin was mysql_native_password. In MySQL 8.4, mysql_native_password is disabled by default, and caching_sha2_password is now the default authentication plugin for RDS for MySQL 8.4. For more information, see MySQL default authentication plugin. You can audit your database users to identify any accounts still using mysql_native_password and update them as needed. Verify that your application drivers and connectors support caching_sha2_password.
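The account audit mentioned above could be scripted roughly as follows. The query reads the user, host, and plugin columns of the mysql.user table; how the rows are fetched is left to whichever MySQL driver you already use, and the function name accounts_needing_migration is hypothetical.

```python
AUDIT_QUERY = (
    "SELECT user, host, plugin FROM mysql.user "
    "WHERE plugin = 'mysql_native_password'"
)


def accounts_needing_migration(rows):
    """Given (user, host, plugin) rows, return 'user'@'host' strings for
    accounts that still authenticate with mysql_native_password."""
    return [
        f"'{user}'@'{host}'"
        for user, host, plugin in rows
        if plugin == "mysql_native_password"
    ]
```

The filter is repeated in Python so the function also works on rows from an unfiltered export of mysql.user. Each account it reports needs its authentication plugin changed and its client driver checked for caching_sha2_password support before the upgrade.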

MySQL 8.4 has also fully transitioned to SOURCE/REPLICA terminology, replacing the legacy MASTER and SLAVE keywords. As a result, review and update any scripts, automation, or documentation that references the old terminology. In addition, there is an updated list of reserved keywords. See MySQL 8.4 Keywords and Reserved Words for the complete list. If any reserved keywords are present in your workload, they will also be flagged in the upgrade precheck log file discussed in the following section.

Lastly, MySQL 8.4 changed the default values for several InnoDB server system variables. These changes can impact performance and behavior, especially if you are relying on the previous defaults. For example, innodb_adaptive_hash_index changed from ON (MySQL 8.0 default) to OFF (MySQL 8.4 default). For a complete list, see MySQL 8.4 Changed Server Defaults. If you have custom parameter group values set for any of these parameters, evaluate whether those are compatible with the new default settings.

For a comprehensive view of all features added, changed, and deprecated in MySQL 8.4 compared to MySQL 8.0, refer to What Is New in MySQL 8.4 since MySQL 8.0.

Upgrade prechecks

When you initiate an upgrade from RDS for MySQL 8.0 to 8.4 from the AWS Management Console or AWS Command Line Interface (AWS CLI), RDS runs mandatory prechecks automatically in the background to detect any incompatibilities. These prechecks run before the DB instance is stopped for the upgrade, with no downtime during the validation phase.

If prechecks detect incompatibilities, RDS automatically cancels the upgrade and generates an event notification. Your original MySQL 8.0 instance remains unchanged with no downtime. To identify the specific incompatibilities that blocked the upgrade, review the PrePatchCompatibility.log file available in the Logs and events section of the Amazon RDS console. This log provides detailed information about each incompatibility, allowing you to address the issues before reattempting the upgrade. You can also retrieve the log file using the AWS CLI with the describe-db-log-files and download-db-log-file-portion commands.

As a best practice, test the upgrade process before upgrading in production. You can use either snapshot restore or RDS Blue/Green Deployments to test the upgrade. Alternatively, you can create an RDS read replica, upgrade the replica first to test for changes, and then upgrade the primary. These tests not only surface upgrade incompatibilities (if any) through the RDS prechecks log file, but also give you an estimate of how long the prechecks take to run and how long the upgrade takes to complete. The duration of the upgrade varies depending on the workload and number of database objects. Lastly, these prechecks identify incompatibilities in the database objects, such as reserved words in a procedure definition. They do not validate any application-side logic. Therefore, verify how any reserved keywords or unsupported syntax can impact your application. For detailed information regarding all RDS prechecks, refer to Best practices for upgrading Amazon RDS for MySQL 8.0 to 8.4 with prechecks, Blue/Green, and rollback.

You can also review any incompatibilities by running the MySQL community upgrade checker utility.

Available version upgrade choices

In this section, we cover the available upgrade options in order of complexity and downtime impact. Upgrading an RDS instance requires downtime for the duration of the upgrade.

- In-place upgrade

- Amazon RDS blue/green deployment

- Snapshot restore

In-place upgrade

The in-place upgrade is the most straightforward path. RDS upgrades the engine version on the existing instance without creating a new one, preserving the same endpoint, resource ID, and storage volume. Before RDS begins the upgrade, it automatically takes a pre-upgrade snapshot that you can use in case of any issues. An in-place upgrade cannot be canceled once started. For Multi-AZ DB instance deployments, RDS upgrades both the primary and standby instances simultaneously during major version upgrades. If the upgrade fails, RDS attempts an automatic rollback. If you do not have automated backups enabled on your RDS instance, take a manual snapshot as a rollback option before starting the upgrade. Verify that your custom parameter groups and option groups are compatible with the target MySQL version, as deprecated or renamed parameters between versions can cause unexpected behavior post-upgrade. For complete steps, refer to upgrading the MySQL DB engine.

Amazon RDS Blue/Green Deployment

For production environments where minimizing downtime is the top priority, Amazon RDS blue/green deployments are the right choice. Amazon RDS creates the green environment from a snapshot of the blue environment. The feature requires automated backups to be enabled and runs your current production instance (blue) and a fully upgraded staging instance (green) side by side, keeping them synchronized via MySQL binary log replication until you’re ready to switch over. Because the storage blocks are loaded from Amazon Simple Storage Service (Amazon S3), the green environment experiences lazy loading effects. Switchover times have been reduced to under five seconds, delivering near-instantaneous cutover with minimal application impact. During switchover, RDS drops existing connections and blocks new connections, performs a final sync, and automatically renames the green endpoints to match the blue endpoints. This eliminates any application configuration changes. If issues surface after the switchover, you can manually switch back to the blue environment, because the original instance remains running and available throughout. The additional infrastructure cost during the deployment window is the primary trade-off, but for risk-averse production upgrades, the near-zero downtime and immediate rollback capability make blue/green the strongest option available.

Snapshot restore

Snapshot restore is a fully manual process best suited for dev/test environments or scenarios where complete isolation between the old and new versions is required. You take a manual snapshot of the source instance, restore from it while selecting the target MySQL 8.4 version, and the upgrade runs as part of the restore operation. The original instance remains untouched throughout, giving you a clean rollback path. To roll back, redirect traffic back to the original endpoint if the upgraded instance has issues. The downside is that the new restored instance gets a different endpoint, requiring application configuration updates, and read replicas must be manually recreated. Restore and upgrade time for large databases can range from minutes to hours, making this approach impractical for production cutover windows but valuable for pre-production validation.

Rollback after failure to upgrade

For an in-place upgrade, you can restore the pre-upgrade snapshot taken by RDS if issues arise. Perform additional validation to verify that there is no data loss for any transactions committed after the snapshot was taken. Blue/green deployments offer the strongest rollback: the blue environment stays live throughout, and switching back takes seconds, making it the right choice for any production upgrade where immediate recovery is a hard requirement. You can also set up manual binlog replication from the green environment to the blue environment to minimize data loss after switchover. Snapshot restore sits in the middle: the original instance is unchanged and available, but you’re managing two separate endpoints and must redirect traffic manually. For a detailed breakdown of rollback behavior across all three methods, refer to the AWS documentation on rollback after failure to upgrade. In all three cases, test and practice your rollback procedure in a non-production environment before the production upgrade window. Check detailed rollback steps in this post.

Summary

The following table summarizes the upgrade options available for Amazon RDS for MySQL:

| Method | Downtime | Rollback capability | Best for |
| --- | --- | --- | --- |
| In-place upgrade | Minutes to hours (varies by deployment type and DB size) | Limited. Automatic rollback might be possible in some cases; otherwise, a snapshot restore is required | Environments with an acceptable downtime window |
| RDS Blue/Green Deployment | Typically under 5 seconds (switchover) | Excellent. Immediate switchback to the blue environment with no data loss | Production upgrades requiring minimal downtime and fast rollback |
| Snapshot restore | Hours (restore + upgrade time) | Good. Original instance unchanged; endpoint change required for rollback | Dev/test validation; isolated upgrade testing before production cutover |

Additional considerations

For Multi-AZ deployments, RDS upgrades both the primary and standby instances simultaneously during the maintenance window, resulting in downtime for the duration of the upgrade process. Blue/green deployments replicate the Multi-AZ configuration in the green environment automatically. If your DB instance uses in-Region or cross-Region read replicas, then you must upgrade all the read replicas before upgrading the source instance. In all cases, review your custom parameter groups against the target MySQL version before initiating the upgrade. Deprecated parameters are a common source of post-upgrade surprises that are easier to catch before the upgrade window than after it.

How Amazon RDS for MySQL performs a major version upgrade

When a major version upgrade is invoked on the console or via the AWS CLI or Amazon RDS API, Amazon RDS automation completes the following steps:

- Verifies if there are any read replicas that need to be upgraded. Read replicas must be upgraded before the primary instance. If you have cross-Region read replicas, upgrade those first, then proceed to upgrade the primary instance.

- Runs mandatory pre-upgrade prechecks. When upgrading from MySQL 8.0 to 8.4, RDS automatically runs prechecks to detect any incompatibilities before the instance is stopped. This step does not cause any downtime. If incompatibilities are found, the upgrade is canceled automatically. The instance remains on MySQL 8.0 with no changes made, and the findings are reported in the PrePatchCompatibility.log file.

- Takes a pre-upgrade snapshot (if automated backups are enabled on the RDS for MySQL DB instance). This snapshot serves as a recovery point and can be used for rollback purposes if the upgrade encounters any issues.

- Shuts down the instance and prepares it for upgrade. RDS places the DB instance into a maintenance state, stops accepting new connections, and prepares the environment for the binary upgrade.

- Upgrades the MySQL binaries and applies data dictionary changes. RDS upgrades the underlying MySQL binaries from 8.0 to 8.4 and runs the necessary internal upgrade routines to update system tables and validate the data directory against the new version.

- Brings the instance back online running MySQL 8.4, updates the engine version metadata, and resumes normal operations.

A major version upgrade cannot be rolled back automatically once it has completed successfully. This is why testing the upgrade on a non-production copy (using a read replica, a snapshot restore, or a point-in-time restore) is strongly recommended before upgrading your production instance.

Key considerations and upgrade best practices

The following key best practices will help you plan and execute a smooth upgrade from RDS for MySQL 8.0 to 8.4. This is not an exhaustive list.

- Changes in MySQL 8.4: Before starting, review Changes in MySQL 8.4 to understand behavioral, configuration, and syntax changes. Important ones include the mysql_native_password authentication plugin being disabled by default, the removal of legacy MASTER/SLAVE terminology, and changes to InnoDB server variable defaults.

- Test the upgrade: Restore a snapshot of your production instance and run the full upgrade against it. Validate application behavior and review key metrics in Amazon CloudWatch and CloudWatch Database Insights, as well as the slow query log, before making changes in production. For detailed guidance, see Testing an RDS for MySQL upgrade.

- New custom parameter group: MySQL 8.4 requires a parameter group family of mysql8.4. Create and configure your new parameter group before the upgrade window, comparing your existing values against the new defaults. Pay particular attention to InnoDB parameters such as innodb_adaptive_hash_index. See Working with parameter groups.

- Review precheck log: RDS runs mandatory prechecks automatically, but run them early on a non-production copy. Download and review the PrePatchCompatibility.log file. A non-zero errorCount means the upgrade did not proceed. Resolve all flagged issues before your production window. For more information, refer to RDS prechecks for upgrades.

- Upgrade to the latest version: Target the latest minor MySQL 8.4 version to get the most recent security patches and bug fixes. After the upgrade, configure the Auto minor version upgrade setting according to your business requirements.

- Handle read replicas (if any): Upgrade cross-Region read replicas first, then in-Region replicas, before upgrading the primary. Multi-AZ standby instances are upgraded automatically. Failing to upgrade replicas first will block the upgrade. See Upgrading a MySQL DB instance.

- Take a manual snapshot: Even though RDS takes an automatic pre-upgrade snapshot when automated backups are enabled, take an explicit manual snapshot immediately beforehand for a clean, known-good restore point. This also speeds up the automated pre-upgrade snapshot RDS takes during the upgrade, because only the changes made since the manual snapshot need to be captured. See Considerations for MySQL upgrades.

- Blue/Green Deployments for critical workloads: For production workloads where minimizing risk and downtime is a priority, use Amazon RDS Blue/Green Deployments. Upgrade the green environment, validate fully, then switch over, typically in under five seconds. The blue environment is retained as a fallback. For detailed guidance, refer to Best practices for Amazon RDS Blue/Green Deployments.

- Monitor RDS recommendations: After the upgrade, check the Recommendations section in the Amazon RDS console regularly for actionable, instance-specific guidance on parameter group adjustments, instance class optimizations, and configuration best practices. Additionally, use Database Insights to review any changes in key metrics.

- Warm the database: The engine restart evicts all InnoDB buffer pool contents. Before routing full production traffic, run your most commonly executed queries to repopulate the buffer pool with your actual working set. Avoid broad SELECT * queries, which can flood the buffer pool with non-representative data.
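The replica ordering from the checklist above (cross-Region replicas, then in-Region replicas, then the primary) can be sketched as a small planning helper. This is only an illustration, not an RDS API: the `DbInstance` type, the role labels, and the instance names are hypothetical. Multi-AZ standbys are left out because RDS upgrades them automatically.

```python
from dataclasses import dataclass

# Hypothetical role labels; a lower rank is upgraded earlier.
_UPGRADE_RANK = {"cross_region_replica": 0, "in_region_replica": 1, "primary": 2}


@dataclass(frozen=True)
class DbInstance:
    name: str
    role: str  # one of the keys in _UPGRADE_RANK


def upgrade_order(instances):
    """Return the instances in the order they should be upgraded."""
    unknown = [i.name for i in instances if i.role not in _UPGRADE_RANK]
    if unknown:
        raise ValueError(f"unknown role for: {', '.join(unknown)}")
    # sorted() is stable, so instances with the same role keep their input order.
    return sorted(instances, key=lambda i: _UPGRADE_RANK[i.role])


# Placeholder fleet for illustration only.
fleet = [
    DbInstance("orders-primary", "primary"),
    DbInstance("orders-replica-1", "in_region_replica"),
    DbInstance("orders-replica-dr", "cross_region_replica"),
]
for inst in upgrade_order(fleet):
    print(inst.name)
```

Writing the order down ahead of the maintenance window makes it harder to start with the primary by mistake, which would block the upgrade.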

Conclusion

In this post, we reviewed the MySQL 8.0 end of standard support timelines, Extended Support charges, and the options available to perform an upgrade. Upgrade your RDS for MySQL instances to the latest minor version in MySQL 8.4 before July 31, 2026 to avoid Extended Support costs. For critical workloads with minimal downtime requirements, use Amazon RDS Blue/Green Deployments and follow the detailed implementation guide (Best practices for upgrading RDS for MySQL 8.0 to 8.4 with prechecks, Blue/Green, and rollback). We recommend that you start by testing the upgrade, reviewing your precheck log, and building familiarity with MySQL 8.4 before performing the upgrade on your production environments.


Best practices for upgrading Amazon RDS for MySQL 8.0 to 8.4 with prechecks, Blue/Green, and rollback

https://d2908q01vomqb2.cloudfront.net/887309d048beef83ad3eabf2a79a64a389ab1c9f/2026/05/14/DB5315.png

Upgrading a production MySQL database across major versions is one of the most high-stakes operations a database team faces. Compatibility breaks, unexpected downtime, and the risk of no easy rollback can stall upgrade plans for months. Amazon Relational Database Service (Amazon RDS) for MySQL 8.0 standard support ends on July 31, 2026, and Amazon RDS for MySQL now supports long-term support (LTS) version 8.4. Teams running MySQL 8.0 workloads need a reliable, low-risk path to MySQL 8.4. However, upgrading from 8.0 to 8.4 involves significant compatibility changes that you must evaluate and test before proceeding. In this post, you learn how to build a complete upgrade workflow for RDS for MySQL 8.0 to 8.4, covering pre-upgrade compatibility checks, Amazon RDS Blue/Green Deployments for minimal downtime, and a rollback strategy for added protection.

If you need to stay on MySQL 8.0 beyond that date, check the latest updates on RDS Extended Support. For a detailed breakdown of Extended Support costs, see Upgrade strategies for Amazon RDS for MySQL 8.0 to 8.4. You can also consider Amazon Aurora MySQL-Compatible Edition, which has its own release calendar and still supports MySQL 8.0 without Extended Support until at least April 30, 2028.

Solution overview

This post is part of a two-part series on upgrading RDS for MySQL 8.0 to 8.4. Here, we focus on the step-by-step implementation, covering pre-upgrade compatibility checks, Blue/Green Deployments, and rollback preparation. For an overview of upgrade strategies, Extended Support costs, and key considerations, see Upgrade strategies for Amazon RDS for MySQL 8.0 to 8.4.

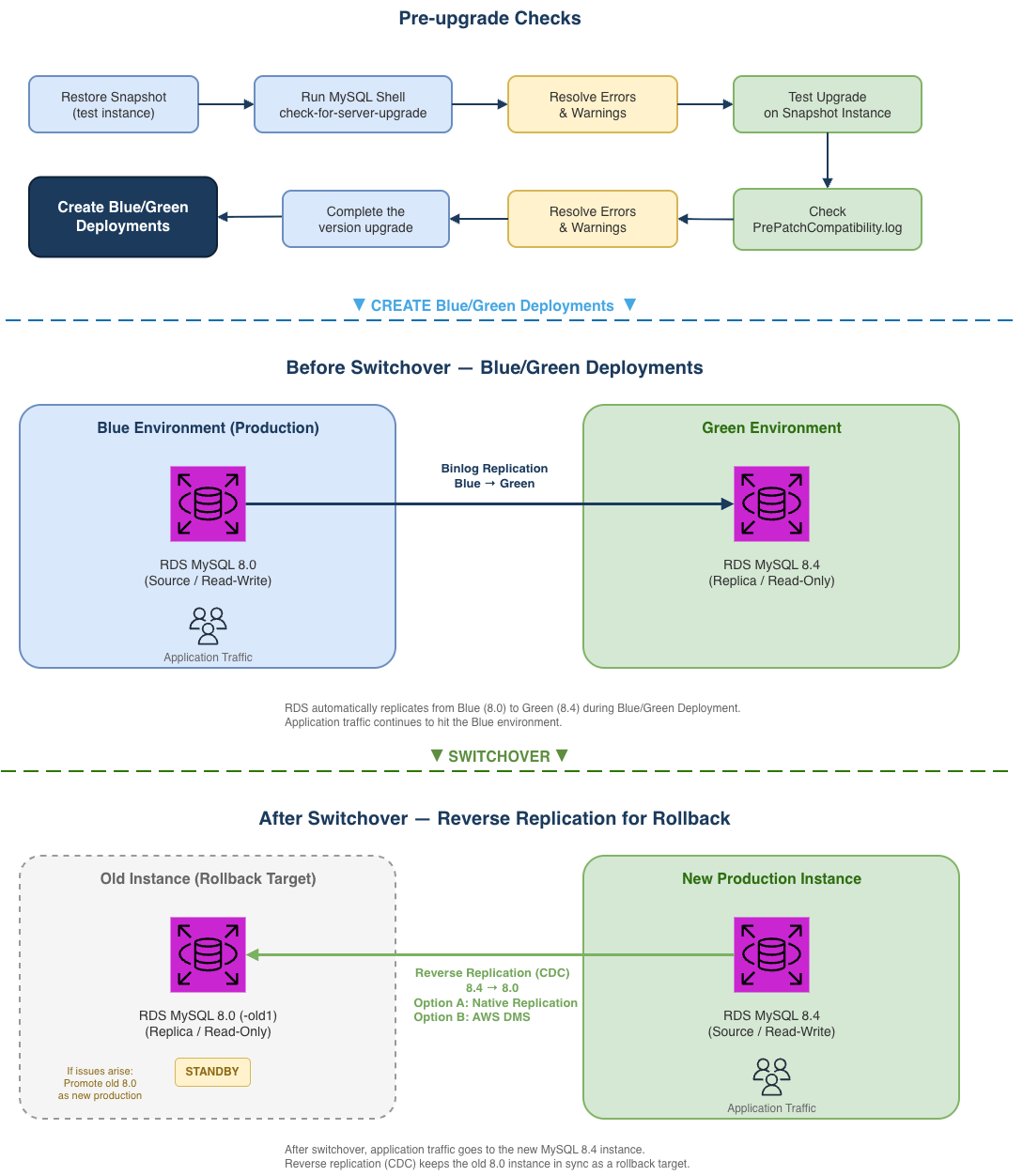

In this post, we walk you through a complete upgrade workflow covering three tightly coupled phases:

- Pre-upgrade checks: Identify incompatibilities using the MySQL Shell upgrade checker utility and RDS built-in validation.

- Blue/Green Deployments: Perform the upgrade with minimal downtime using Amazon RDS Blue/Green Deployments.

- Rollback preparation: Set up reverse replication before switchover so you can roll back with confidence.

These phases are not independent: precheck results determine whether the green environment can upgrade successfully, the binlog position from the Blue/Green Deployments switchover feeds into the rollback setup, and you need to make sure the rollback solution is ready before you switch over. The following architecture diagram illustrates the complete workflow, helping you understand how each phase interacts with the others.

Prerequisites

Blue/Green Deployments and RDS for MySQL 8.4 are available in all AWS Regions. Before you begin, make sure you have the following resources in place:

- An Amazon RDS for MySQL 8.0 instance with automated backups enabled.

- An Amazon Elastic Compute Cloud (Amazon EC2) instance or bastion host in the same VPC for running MySQL Shell.

- A custom DB parameter group created for MySQL 8.4.

- An IAM user or role with at least the following permissions for Blue/Green Deployments:

  - Create Blue/Green Deployments

    - rds:CreateBlueGreenDeployment

    - rds:AddTagsToResource

    - rds:CreateDBInstanceReadReplica

  - Switchover Blue/Green Deployments

    - rds:SwitchoverBlueGreenDeployment

    - rds:ModifyDBInstance

    - rds:PromoteReadReplica

  - Delete Blue/Green Deployments

    - rds:DeleteBlueGreenDeployment

    - rds:DeleteDBInstance

- (For rollback) An AWS Database Migration Service (AWS DMS) replication instance, or network connectivity between the 8.4 and 8.0 instances for native replication. Make sure the security groups associated with both instances allow MySQL traffic on port 3306 between them. If using AWS DMS, see AWS DMS identity-based policy examples for IAM permission best practices.
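As a rough sketch, the Blue/Green permissions listed above can be assembled into an IAM policy document. The action names are taken from the list; the statement ID, the `build_policy` helper, and the `"*"` resource are assumptions made for illustration, and in practice you would likely scope `Resource` to specific ARNs.

```python
import json

# Actions copied from the prerequisite list, grouped by operation.
BLUE_GREEN_ACTIONS = {
    "create": [
        "rds:CreateBlueGreenDeployment",
        "rds:AddTagsToResource",
        "rds:CreateDBInstanceReadReplica",
    ],
    "switchover": [
        "rds:SwitchoverBlueGreenDeployment",
        "rds:ModifyDBInstance",
        "rds:PromoteReadReplica",
    ],
    "delete": [
        "rds:DeleteBlueGreenDeployment",
        "rds:DeleteDBInstance",
    ],
}


def build_policy(resource="*"):
    """Build a single-statement IAM policy allowing all listed actions.

    The resource defaults to "*" only to keep the sketch short (assumption).
    """
    actions = sorted({a for group in BLUE_GREEN_ACTIONS.values() for a in group})
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "BlueGreenUpgrade",  # hypothetical statement ID
                "Effect": "Allow",
                "Action": actions,
                "Resource": resource,
            }
        ],
    }


print(json.dumps(build_policy(), indent=2))
```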

Phase 1: Pre-upgrade checks

Before upgrading, use the MySQL Shell check-for-server-upgrade utility to identify incompatibilities. Because the utility performs intensive metadata scans across all databases, we recommend running it against a snapshot-restored instance rather than your production database to avoid impacting workload performance. If you are working in a dev/test environment, you can skip the snapshot restore and run the check directly.

To run the pre-upgrade check:

- Restore a DB snapshot of your MySQL 8.0 production instance. This gives you an isolated copy to safely run the check without affecting production.

- Connect to your Amazon EC2 bastion host (or any instance in the same VPC that has MySQL Shell installed). If MySQL Shell is not already installed, see Installing MySQL Shell.

- The upgrade checker utility requires RELOAD, PROCESS, and SELECT privileges. Before running the check, make sure your user account has these privileges. You can use the RDS master user, or grant the required privileges with the following commands:

- Run the upgrade checker:

The utility categorizes findings into three severity levels:

- Error (blocks upgrade).

- Warning (evaluate case-by-case).

- Notice (informational).

Whether an RDS for MySQL instance can successfully upgrade from 8.0 to 8.4 is ultimately determined by the RDS pre-upgrade validation. Therefore, this section focuses on the intersection of MySQL Shell’s check-for-server-upgrade findings and the RDS built-in pre-upgrade checks, providing a practical reference for planning your upgrade (detailed in the following section).

MySQL Shell vs. RDS pre-upgrade checks

The MySQL Shell upgrade checker and the RDS built-in pre-upgrade validation have slightly different default check items. The following table provides the main differences between the two. For detailed explanations and resolution steps for each check item, please see the “Understanding and resolving pre-upgrade check errors” section.

Items with a Source of Shell Only are not enforced by the RDS upgrade validation, so their Severity is marked as –.

| Check item | Source | Severity | Notes |
| --- | --- | --- | --- |
| Removed system variables | Shell Only | – | Can be ignored. RDS upgrade validation does not enforce this item. |
| System variables with new default values | Both | Warning | This check lists parameters whose default values have changed in MySQL 8.4. If you have customized any of these in your MySQL 8.0 parameter group, verify whether your values need to carry over to the MySQL 8.4 parameter group. |
| Issues reported by ‘check table x for upgrade’ command | Both | Varies | Severity depends on the storage engine; handle based on actual precheck output. Typically, if the result shows “Corrupt”, the upgrade checker treats it as an Error item. |
| Checks for foreign keys not referencing a full unique index | Both | Warning | MySQL 8.4 may forbid foreign keys to partial indexes; this check identifies such cases to warn the user. |
| Check for deprecated or invalid user authentication methods. | Both | Warning | MySQL 8.4 may deprecate or remove some authentication methods; this check identifies users still using them. |
| Check for deprecated or removed plugin usage. | Both | Error | RDS does not support these plugins (authentication_fido, keyring_file, keyring_encrypted_file, keyring_oci), so this check is unlikely to be triggered on RDS. If flagged, verify and remove the plugin. |
| Check for deprecated or invalid default authentication methods in system | Shell Only | – | Can be ignored. RDS upgrade checks ignore this item. |
| Check for deprecated or invalid authentication methods in use by MySQL | Shell Only | – | Can be ignored. RDS upgrade checks ignore this item. |
| Checks for errors in column definitions | Both | Error | Identifies column definitions that MySQL 8.4 may no longer support. |
| Check for allowed values in System Variables. | Both | Error | Checks whether system variables use values that MySQL 8.4 no longer allows. |
| Checks for user privileges that will be removed | Both | Notice | Informational only. Verifies users with grants that the upgrade process will remove. |
| Checks for partitions by key using columns with prefix key indexes | Both | Error | MySQL 8.4 no longer supports indexes on column prefixes for key partitioning. The partition function ignores these indexes, so MySQL 8.4 no longer allows them. |
| Use of AUTO_INCREMENT for DOUBLE and FLOAT data types | RDS Only | Error | MySQL 8.4 deprecates AUTO_INCREMENT for DOUBLE and FLOAT data types. |
| RDS checked for the use of non-inclusive language in SQL statements. | RDS Only | Error | MySQL 8.4 no longer allows non-inclusive language in SQL statements. |
| memcached plugin needs to be uninstalled before upgrade | RDS Only | Error | MySQL 8.3 and later no longer support the InnoDB memcached plugin. |
| DB instance must have enough free disk space | RDS Only | Error | Make sure the instance has at least 2 GiB of free storage space. |
| Detect system objects created as tables in sys schema | RDS Only | Error | Remove any user-created tables in the sys schema before upgrading. |

The RDS built-in pre-upgrade validation runs only when you initiate an actual upgrade. If the validation finds blocking issues at that point, the upgrade fails, and you need to resolve them and schedule another maintenance window. Running the MySQL Shell upgrade checker beforehand helps you identify and resolve these issues in advance.

The recommended approach:

- Run the MySQL Shell upgrade checker to resolve the overlapping items. RDS manages shell-only items marked “can be ignored” internally.

- Perform a test upgrade on a snapshot-restored instance to catch the RDS-only items via PrePatchCompatibility.log.
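Once you have downloaded the precheck log from the test upgrade, a short script can flag whether it contains blocking findings. The sketch below assumes the log is a JSON document with a top-level `errorCount` field, and that per-check findings sit under `checksPerformed` and `detectedProblems` with a `level` and `dbObject` (as in MySQL Shell's JSON report). Treat those field names, the `summarize_precheck` helper, and the sample data as assumptions to verify against your own log.

```python
import json


def summarize_precheck(log_text):
    """Return (error_count, blocking_findings) from a JSON precheck report."""
    report = json.loads(log_text)
    # Fail loudly if the field is missing instead of assuming zero errors.
    error_count = int(report["errorCount"])
    blocking = []
    for check in report.get("checksPerformed", []):
        for problem in check.get("detectedProblems", []):
            if str(problem.get("level", "")).lower() == "error":
                blocking.append((check.get("id", "?"), problem.get("dbObject", "?")))
    return error_count, blocking


# Made-up sample for illustration; this is not real RDS output.
sample = json.dumps({
    "errorCount": 1,
    "warningCount": 1,
    "checksPerformed": [
        {"id": "example_error_check",
         "detectedProblems": [{"level": "Error", "dbObject": "shop.t1.id"}]},
        {"id": "example_warning_check",
         "detectedProblems": [{"level": "Warning", "dbObject": "app@%"}]},
    ],
})

count, blocking = summarize_precheck(sample)
if count == 0:
    print("no blocking errors reported")
else:
    print(f"blocked by {count} error(s): {blocking}")
```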

Understanding and resolving pre-upgrade check errors

To show you the pre-upgrade check process and the Error items that block upgrades, we reproduced several Error items in our test environment. In the following sections, we walk you through the actual precheck output and the suggested resolution for each item, so you can interpret and resolve precheck errors in practice.

Issues reported by ‘check table x for upgrade’ command

This check runs CHECK TABLE … FOR UPGRADE against each table. Depending on the scope of impact, the severity varies. The following are examples of both Error and Warning types.

| Check item | Issues reported by ‘check table x for upgrade’ command |
| --- | --- |
| Description | The view references a table, column, or function that no longer exists, or the definer/invoker lacks the required privileges. The “Corrupt” status indicates this is an Error-level finding that blocks the upgrade. |
| Remediation | Identify the invalid references and remove or fix them. |

| Check item | Issues reported by ‘check table x for upgrade’ command |
| --- | --- |
| Description | The table uses COMPACT or REDUNDANT row format, where each BLOB/TEXT column stores a 768-byte prefix inline. With many such columns, the total row size exceeds InnoDB’s 8126-byte row size limit. |
| Remediation | Changing some columns to TEXT or BLOB or using ROW_FORMAT=DYNAMIC or ROW_FORMAT=COMPRESSED may help. This is a Warning item and does not block the upgrade, but INSERT or UPDATE operations that exceed the row size limit may fail after the upgrade. |

Check for deprecated or removed plugin usage

| Check item | Check for deprecated or removed plugin usage. |
| --- | --- |
| Description | RDS for MySQL does not support installing the plugins that the precheck scans for (authentication_fido, keyring_file, keyring_encrypted_file, keyring_oci), so this item cannot be reproduced. If detected, remove the reported plugin(s) and retry the upgrade. |
| Remediation | Uninstall the plugin(s) if found. |

Checks for errors in column definitions

| Check item | Checks for errors in column definitions. Identifies column definitions that may not be supported in future versions of MySQL. |
| --- | --- |
| Description | Using DOUBLE or FLOAT with AUTO_INCREMENT is no longer allowed in MySQL 8.4. This check and “Use of AUTO_INCREMENT for DOUBLE and FLOAT data types” point to the same affected tables. |
| Remediation | Change the column type to an integer type (for example: BIGINT). |

Check for allowed values in system variables

| Check item | Check for allowed values in system variables. The following system variables are using values that are not allowed. |
| --- | --- |
| Description | Checks whether system variables use values that are no longer allowed in MySQL 8.4. |
| Remediation | Find non-default system variables and change the value(s). |

Checks for partitions by key using columns with prefix key indexes

| Check item | Checks for partitions by key using columns with prefix key indexes. Indexes on column prefixes are not supported for key partitioning, they are ignored by the partition function and so they are not allowed as of 8.4.0. This check identifies tables with partitions defined this way, they should be fixed before upgrading to 8.4.0. More information: Partitioning limitations |
| --- | --- |
| Description | Tables partitioned by key using columns with prefix key indexes are no longer compatible in MySQL 8.4. |
| Remediation | If the partition key column uses a prefix index (for example: KEY(col(10))), remove the prefix and use the full column, or redesign the partition strategy (for example: switch to RANGE or HASH). |

Use of AUTO_INCREMENT for DOUBLE and FLOAT data types

| Check item | Use of AUTO_INCREMENT for DOUBLE and FLOAT data types. Starting with MySQL version 8.4.0, the use of AUTO_INCREMENT is deprecated for DOUBLE and FLOAT data types. |
| --- | --- |
| Description | This is the RDS-specific check for the same issue as “Checks for errors in column definitions”. Both checks point to the same affected tables. |
| Remediation | Same as “Checks for errors in column definitions”: change the column type to an integer type. |

RDS checked for the use of non-inclusive language in SQL statements

| Check item | RDS checked for the use of non-inclusive language in SQL statements. Starting with MySQL version 8.4.0, the use of non-inclusive language in SQL statements isn’t allowed. |
| --- | --- |
| Description | Starting with MySQL 8.4.0, non-inclusive language (for example: MASTER, SLAVE) in SQL statements is no longer allowed. |
| Remediation | Replace non-inclusive keywords with their inclusive equivalents (for example: SOURCE instead of MASTER, REPLICA instead of SLAVE). |

memcached plugin needs to be uninstalled before upgrade

| Check item | memcached plugin needs to be uninstalled before upgrade. Starting with MySQL version 8.3.0, the InnoDB memcached plugin is no longer supported. |
| --- | --- |
| Description | The InnoDB memcached plugin is no longer supported starting with MySQL 8.3.0. Remove it before upgrading. |
| Remediation | Remove the MEMCACHED option from the Option Group associated with the DB instance. |

DB instance must have enough free disk space

| Check item | DB instance must have enough free disk space. The DB instance must have at least 2 GiB free space for an upgrade to MySQL 8.0.30 and above. |
| --- | --- |
| Description | The DB instance must have at least 2 GiB of free disk space for upgrades to MySQL 8.0.30 and above. |
| Remediation | Scale up the storage so that the instance has more than 2 GiB of free storage space (FreeStorageSpace). |

Detect system objects created as tables in sys schema

| Check item | Detect system objects created as tables in sys schema. |
| --- | --- |
| Description | Detects whether system objects in the sys schema have been created as regular tables. These objects can conflict during the upgrade process. |
| Remediation | If the check returns results, remove the user-created tables in the sys schema before upgrading. |

Key compatibility changes in MySQL 8.4

The following changes most commonly affect RDS for MySQL upgrades:

mysql_native_password deprecation: MySQL 8.4 defaults to caching_sha2_password. Existing users with mysql_native_password continue to work after the upgrade, but newly created users will use caching_sha2_password. To change the default authentication plugin, modify the authentication_policy parameter in your DB parameter group. If you do not plan to keep using mysql_native_password, consider switching affected users to caching_sha2_password on your MySQL 8.0 instance and validating application connectivity before proceeding with the upgrade.

Applications using older client drivers that do not support caching_sha2_password will fail to connect with the error: Authentication plugin ‘caching_sha2_password’ cannot be loaded. The following minimum driver versions are required:

Query affected users:

restrict_fk_on_non_standard_key: New parameter in MySQL 8.4 (default ON) that blocks CREATE TABLE and ALTER TABLE statements from creating foreign keys on non-unique or partial keys. This does not affect existing foreign keys or the upgrade itself. It only applies to data definition language (DDL) operations after the upgrade. If your application creates or modifies foreign keys at runtime, set this parameter to OFF in your MySQL 8.4 parameter group, or adjust your DDL statements accordingly.

memcached plugin removal: If enabled in your option group, disable it before upgrading (the RDS pre-upgrade validation can also flag this as an Error).

Parameter default value changes in MySQL 8.4

Beyond the compatibility changes above, MySQL 8.4 also changes the default values for several system variables. If you have explicitly set some of these in your MySQL 8.0 parameter group, verify whether your values need to carry over to the MySQL 8.4 parameter group. If you are using the engine defaults, be aware of the new behavior. The following table only includes parameters that are modifiable in the RDS for MySQL 8.4 parameter group.

| Parameter | 8.0 default | 8.4 default |
| --- | --- | --- |
| group_replication_consistency | EVENTUAL | BEFORE_ON_PRIMARY_FAILOVER |
| group_replication_exit_state_action | READ_ONLY | OFFLINE_MODE |
| innodb_adaptive_hash_index | ON | OFF |
| innodb_buffer_pool_instances | 8 (or 1 if innodb_buffer_pool_size < 1 GB) | MAX(1, #vcpu/4) |
| innodb_change_buffering | all | none |
| innodb_io_capacity | 200 | 10000 |
| innodb_io_capacity_max | 200 | 2 × innodb_io_capacity |
| innodb_numa_interleave | OFF | ON |
| innodb_page_cleaners | 4 | innodb_buffer_pool_instances |
| innodb_parallel_read_threads | 4 | MAX(#vcpu/8, 4) |
| innodb_read_io_threads | 4 | MAX(#vcpu/2, 4) |
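To get a feel for what the vCPU-dependent 8.4 defaults in the table evaluate to on a given instance size, you can work them out with a short helper. This sketch only mirrors the formulas as written in the table; the `mysql84_defaults` function name and the use of integer division are assumptions, and the engine may apply further limits that are not modeled here.

```python
def mysql84_defaults(vcpus):
    """Evaluate the vCPU-dependent 8.4 defaults from the table above."""
    if vcpus < 1:
        raise ValueError("vcpus must be at least 1")
    buffer_pool_instances = max(1, vcpus // 4)  # MAX(1, #vcpu/4)
    io_capacity = 10000
    return {
        "innodb_buffer_pool_instances": buffer_pool_instances,
        "innodb_io_capacity": io_capacity,
        "innodb_io_capacity_max": 2 * io_capacity,  # 2 x innodb_io_capacity
        "innodb_page_cleaners": buffer_pool_instances,
        "innodb_parallel_read_threads": max(vcpus // 8, 4),  # MAX(#vcpu/8, 4)
        "innodb_read_io_threads": max(vcpus // 2, 4),  # MAX(#vcpu/2, 4)
    }


# Example: a 16-vCPU instance.
for name, value in mysql84_defaults(16).items():
    print(f"{name} = {value}")
```

Comparing these values with what your MySQL 8.0 parameter group sets today shows which settings change behavior if you rely on engine defaults after the upgrade.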

You can check which parameters have been explicitly modified in your current parameter group:
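One possible way to do this programmatically is with the AWS SDK for Python (Boto3), filtering on parameters whose source is `user`. The sketch below is not from the original walkthrough: the `user_modified_parameters` helper and the parameter group name are placeholders, and the function takes an already-created RDS client so that it does not depend on credentials being available at import time.

```python
def user_modified_parameters(rds_client, parameter_group_name):
    """Return {name: value} for parameters explicitly set in the group.

    rds_client is expected to behave like boto3.client("rds").
    """
    paginator = rds_client.get_paginator("describe_db_parameters")
    pages = paginator.paginate(
        DBParameterGroupName=parameter_group_name,
        Source="user",  # only parameters modified from their defaults
    )
    modified = {}
    for page in pages:
        for param in page.get("Parameters", []):
            modified[param["ParameterName"]] = param.get("ParameterValue")
    return modified


# Example usage (requires boto3 and AWS credentials; the group name is a placeholder):
#   import boto3
#   print(user_modified_parameters(boto3.client("rds"), "my-mysql80-params"))
```

You can then compare the returned names against the defaults table to decide which values to carry over to the MySQL 8.4 parameter group.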

For the complete list of changes, see Amazon RDS for MySQL LTS version 8.4 is now generally available.

Go/no-go checklist

Before proceeding to Phase 2, confirm:

Phase 2: Blue/Green Deployments (minimize downtime)

Amazon RDS Blue/Green Deployments lets you upgrade the major engine version in a staging environment without affecting production. When you’re ready, you promote the staging environment to become the new production database, with downtime typically of five seconds or less for single-Region configurations. This makes Blue/Green Deployments a practical choice for major version upgrades with minimal disruption. The following sections walk you through creating Blue/Green Deployments, upgrading the green environment to MySQL 8.4, and performing the switchover.

Prechecks

Before creating the deployment, confirm:

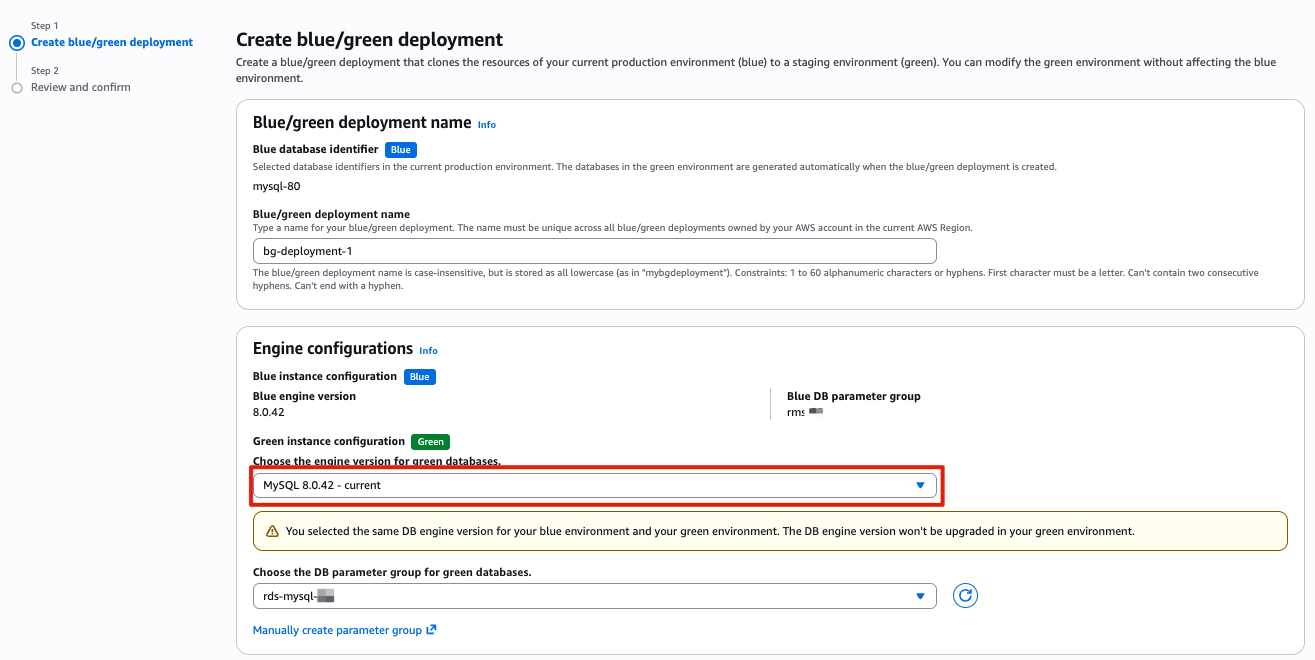

Create and upgrade the green environment

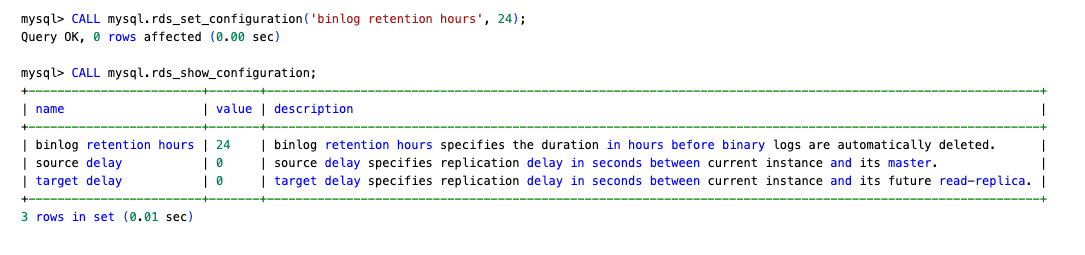

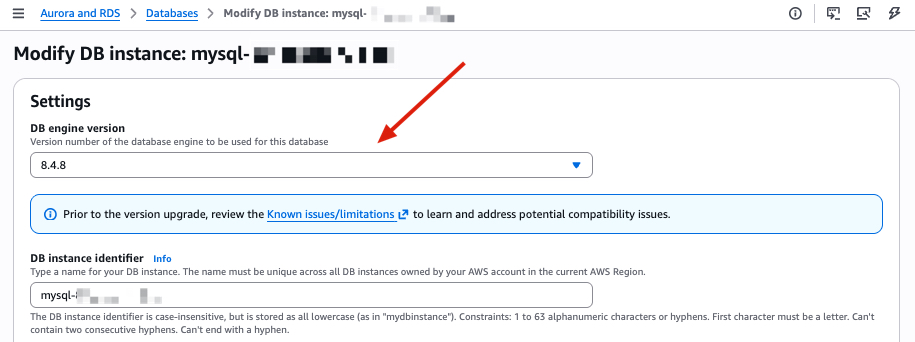

- Create the Blue/Green Deployments: Select the same engine version as the source instance initially. This avoids a failed automatic upgrade requiring you to rebuild the entire deployment.

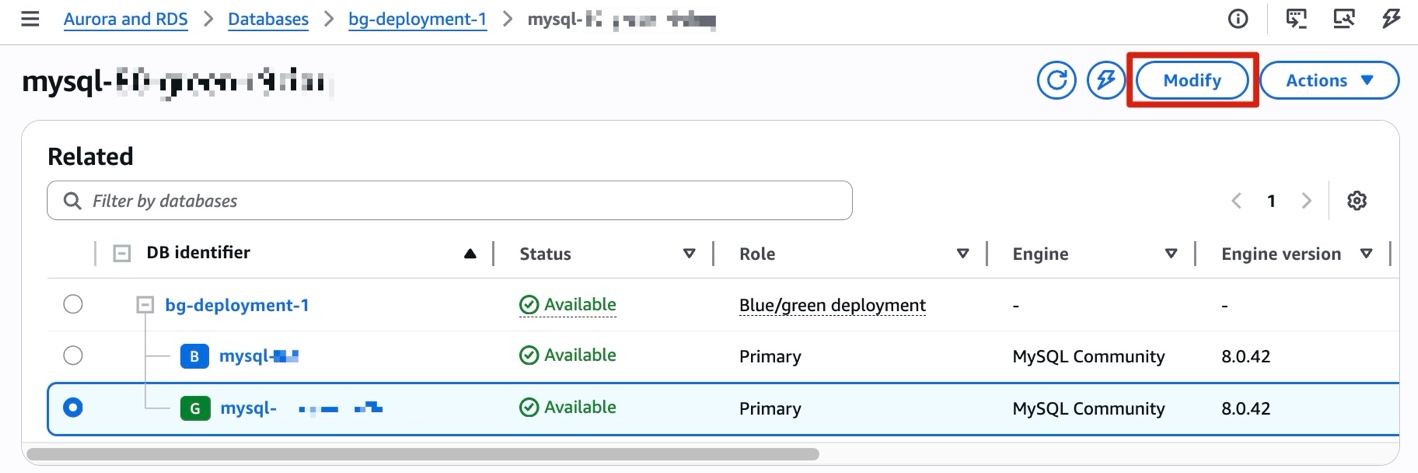

- After creation completes, manually upgrade the green instance to MySQL 8.4.x. Select your pre-configured MySQL 8.4 parameter group, or the default parameter group if you’re not customizing any parameters.

- If the upgrade fails, check the green instance’s PrePatchCompatibility.log and Recent events for details. Resolve the issues and retry.

- (Optional) If you plan to set up a rollback path in Phase 3, verify that binlog retention on the green instance (MySQL 8.4) is set to at least 24 hours before switchover. Otherwise, binlog files might be purged after switchover, making reverse replication impossible.

Switchover

Before switching over:

- Confirm ReplicaLag on the green instance is near zero.

- Check for long-running queries on the blue instance (SHOW PROCESSLIST).

- Verify both environments show Available status.

- Verify that the application isn’t caching DNS, or, if it is, that the cache TTL is 5 seconds or less.

- Complete the rollback setup in Phase 3 first.
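The pre-switchover checks above lend themselves to a simple go/no-go gate. The following is a minimal sketch, assuming you collect the values yourself (for example from CloudWatch and SHOW PROCESSLIST); the `SwitchoverReadiness` type, the field names, and the one-second threshold used for "near zero" lag are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class SwitchoverReadiness:
    replica_lag_seconds: float      # ReplicaLag on the green instance
    long_running_queries: int       # count observed on the blue instance
    blue_status: str
    green_status: str
    dns_cache_ttl_seconds: float    # 0 if the application does not cache DNS
    rollback_setup_done: bool


def switchover_blockers(r, max_lag_seconds=1.0, max_dns_ttl_seconds=5.0):
    """Return a list of reasons not to switch over yet (empty means go)."""
    blockers = []
    if r.replica_lag_seconds > max_lag_seconds:
        blockers.append("replica lag on green is not near zero")
    if r.long_running_queries > 0:
        blockers.append("long-running queries on blue")
    if r.blue_status.lower() != "available" or r.green_status.lower() != "available":
        blockers.append("both environments must be Available")
    if r.dns_cache_ttl_seconds > max_dns_ttl_seconds:
        blockers.append("application DNS cache TTL exceeds 5 seconds")
    if not r.rollback_setup_done:
        blockers.append("rollback setup is not complete")
    return blockers


ready = SwitchoverReadiness(0.0, 0, "Available", "Available", 5.0, True)
print(switchover_blockers(ready) or "go")
```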

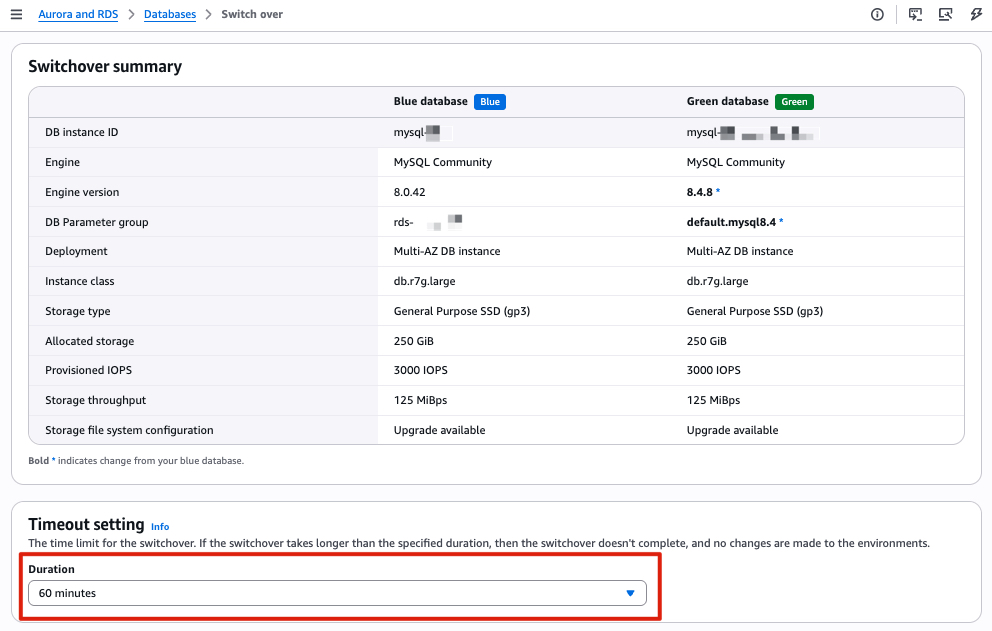

Set the switchover timeout to your acceptable downtime window (maximum 60 minutes). During switchover, existing connections are dropped.

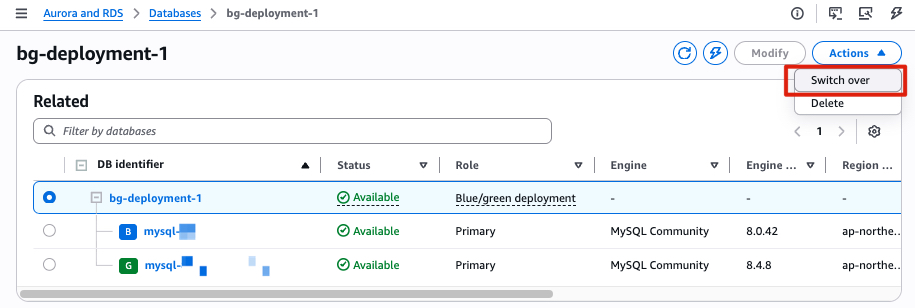

After a successful switchover, RDS renames the old instance with a -old1 suffix and sets it to read-only. The new MySQL 8.4 instance takes the original name and endpoint. RDS automatically disconnects replication at this point. Blue/Green Deployments is a one-time operation. You can delete the Blue/Green Deployments at your convenience; doing so does not delete the old instance. If you do not need a rollback path, the upgrade is complete at this point.

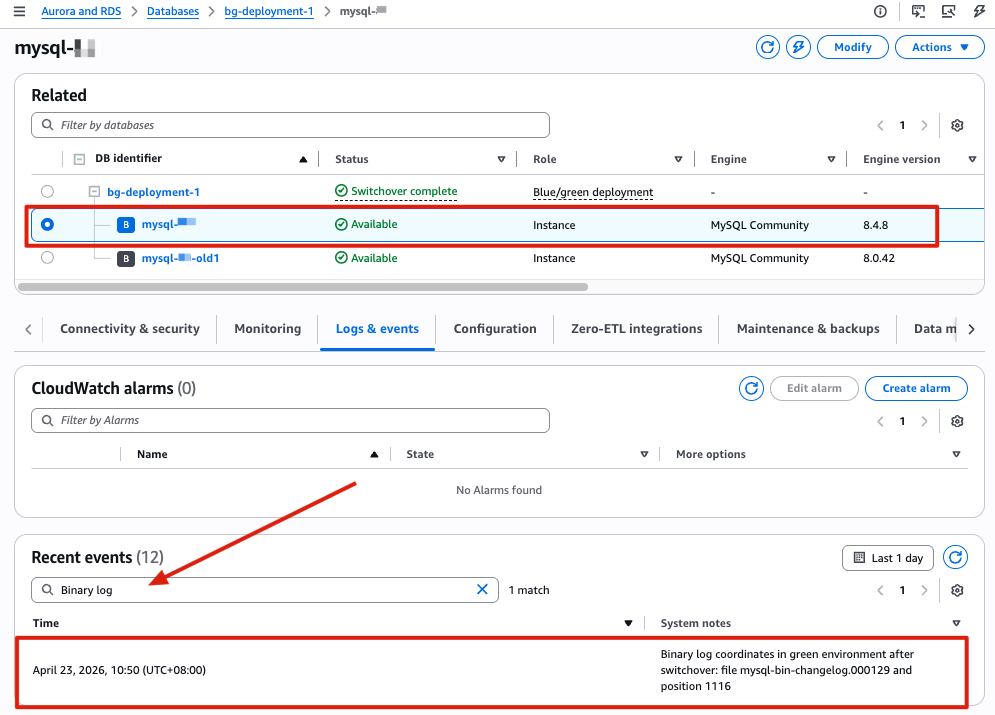

Important: Note the binlog position recorded after the switchover completes; you need it for the rollback setup in Phase 3. Follow these steps to find the binlog position after switchover:

- Open the Amazon RDS console and select your new MySQL 8.4 instance.

- Open the Logs & events tab and search for Binary log. Note the last recorded binlog file name and position. For example, in our test environment, the file name is mysql-bin-changelog.000129 and the position is 1116.

Phase 3: Rollback preparation

Set up reverse replication from the new MySQL 8.4 instance to the old MySQL 8.0 instance after switchover, so you have a working rollback path if issues arise post-upgrade. This approach uses change data capture (CDC) to synchronize only incremental changes. The key input is the binlog position recorded in the switchover event (Logs & events → Recent events on the new MySQL 8.4 instance). Use this position to establish reverse replication back to the old 8.0 instance.

The following table provides a brief comparison between Option A (native replication) and Option B (AWS DMS) to help you choose the approach that best fits your environment:

| Criteria | Native Replication | AWS DMS |
| --- | --- | --- |
| Setup complexity | Lower | Higher |
| Additional cost | None | DMS instance cost |
| LOB handling | Automatic | Requires DMS task configuration |
| When to use | Direct network connectivity available | Complex environments or when native replication isn’t feasible |

Option A: Native replication (preferred)

Migration between RDS for MySQL instances is a homogeneous data migration. We recommend native replication as the preferred approach, because native database migration tools generally provide better performance and accuracy compared to AWS DMS.

After switchover, you do not need to change the read_only parameter to 0 on the MySQL 8.0 -old1 instance. In replication setups, it can be useful to enable read_only on replica servers to make sure that replicas accept updates only from the source server and not from clients.

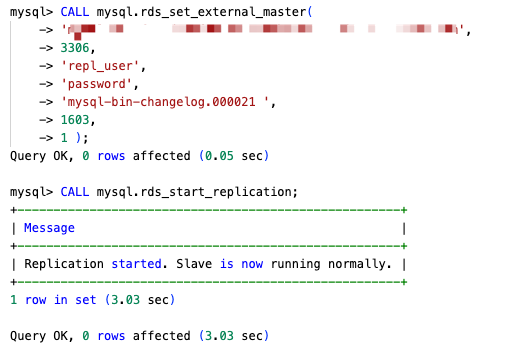

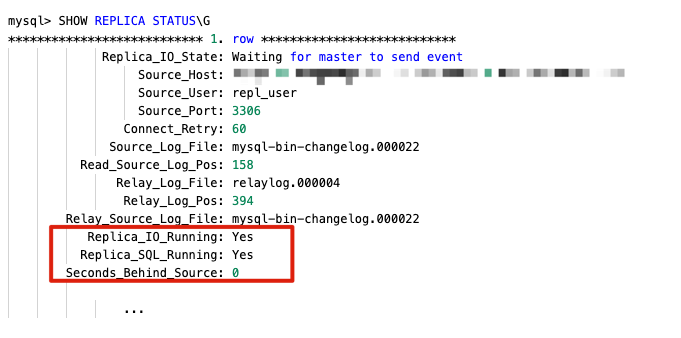

- Retrieve the binlog position from the new MySQL 8.4 instance (Logs & events → Recent events).

- On the MySQL 8.4 instance, create a replication user.

- On the MySQL 8.0 -old1 instance, configure replication using the binlog position from step 1.

- Verify with SHOW REPLICA STATUS\G and confirm the following:

- Replica_IO_Running: Yes

- Replica_SQL_Running: Yes

- Seconds_Behind_Source (shown as Seconds_Behind_Master in SHOW SLAVE STATUS output) is decreasing, approaching 0, and is not -1 or NULL
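The native replication steps above can be sketched as a small Python helper that assembles the SQL statements and evaluates a replica status row. This is a minimal sketch under stated assumptions, not the exact commands from the original post: it assumes the RDS stored procedures `mysql.rds_set_external_master` (the name used on RDS for MySQL 8.0) and `mysql.rds_start_replication`, and the host name, user name, and password shown are hypothetical placeholders. Authentication plugin and SSL settings depend on your environment, so verify them before use.

```python
def build_reverse_replication_sql(source_host, repl_user, repl_password,
                                  binlog_file, binlog_pos, port=3306, ssl=0):
    """Return (statements for the 8.4 source, statements for the 8.0 replica)."""
    on_source = [
        # Run on the new MySQL 8.4 instance: create the replication user.
        f"CREATE USER '{repl_user}'@'%' IDENTIFIED BY '{repl_password}';",
        f"GRANT REPLICATION SLAVE, REPLICATION CLIENT ON *.* TO '{repl_user}'@'%';",
    ]
    on_replica = [
        # Run on the old MySQL 8.0 instance: point it at the 8.4 instance,
        # starting from the binlog file and position noted after switchover.
        f"CALL mysql.rds_set_external_master('{source_host}', {int(port)}, "
        f"'{repl_user}', '{repl_password}', '{binlog_file}', "
        f"{int(binlog_pos)}, {int(ssl)});",
        "CALL mysql.rds_start_replication;",
    ]
    return on_source, on_replica


def replica_is_healthy(status):
    """Check one SHOW REPLICA STATUS row (as a dict) against the criteria above.

    A single sample cannot show that lag is decreasing, so this only checks
    that both threads run and that lag is a non-negative number.
    """
    lag = status.get("Seconds_Behind_Source")
    if lag is None:
        lag = status.get("Seconds_Behind_Master")
    try:
        lag_ok = int(lag) >= 0
    except (TypeError, ValueError):
        lag_ok = False
    return (status.get("Replica_IO_Running") == "Yes"
            and status.get("Replica_SQL_Running") == "Yes"
            and lag_ok)


if __name__ == "__main__":
    # Hypothetical endpoint and credentials; binlog values from the example above.
    source_sql, replica_sql = build_reverse_replication_sql(
        "mydb-84.example.internal", "repl_user", "change-me",
        "mysql-bin-changelog.000129", 1116)
    print("\n".join(source_sql + replica_sql))
```

To confirm that lag is actually shrinking, call `replica_is_healthy` on several samples taken a few seconds apart and compare the lag values.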

Option B: AWS DMS

If native replication is not suitable for your environment, you can use AWS DMS as an alternative to set up the rollback path.

Because the MySQL 8.0 -old1 instance is set to read-only after switchover, change the read_only parameter to 0 in its parameter group before creating the task.

- Step 1: Create a DMS replication instance

- Instance class & Allocated storage: based on workload

- Engine version: use the default

- Virtual private cloud (VPC): use the same VPC as both the MySQL 8.4 (source) and MySQL 8.0 (target) instances. Note: If the source and target instances are in different VPCs, you need to configure VPC peering or another network connectivity option before creating the replication instance.

- Step 2: Create DMS endpoints

- Source endpoint: use the MySQL 8.4 instance endpoint (after switchover)

- Target endpoint: use the old MySQL 8.0 -old1 instance endpoint

- Test both endpoint connections from the replication instance before proceeding

- Step 3: Create a CDC-only DMS task

- Migration type: CDC only (ongoing replication)

- CDC start position: enter the binlog position from the switchover event (Logs & events → Recent events on the new MySQL 8.4 instance)

- Include LOB columns in replication: if your tables contain LOB columns, consider using Limited LOB mode for faster replication. This mode truncates any LOB value that exceeds the configured Maximum LOB size, so set that size large enough for your data.

- Maximum LOB size (KB): query the maximum LOB size in your instance first, then set accordingly:

- Table mappings: either explicitly include the databases you want to synchronize, or use % to include every database and exclude system databases (mysql, sys, information_schema, performance_schema).

- Step 4: Monitor the task

- Once the task status shows Replication ongoing, CDC synchronization is active and the rollback path is ready.
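The LOB sizing and table-mapping settings from Step 3 can be sketched in Python as follows. This is a minimal sketch, not the configuration from the original post: the rule names, the 20% headroom factor, and the schema, table, and column names in the usage example are assumptions for illustration. The JSON follows the AWS DMS selection-rule format, with `%` as the wildcard.

```python
import json
import math

SYSTEM_SCHEMAS = ("mysql", "sys", "information_schema", "performance_schema")


def max_lob_query(schema, table, column):
    """Build a query returning the largest LOB value, in bytes, for one column."""
    return f"SELECT MAX(LENGTH(`{column}`)) FROM `{schema}`.`{table}`;"


def max_lob_size_kb(max_lob_bytes, headroom=1.2):
    """Convert the largest observed LOB size to KB, rounded up.

    The headroom factor is an assumption: it leaves room for values
    that grow after you measure them.
    """
    return math.ceil(max_lob_bytes * headroom / 1024)


def build_table_mappings(system_schemas=SYSTEM_SCHEMAS):
    """Include every schema with %, then exclude the system schemas."""
    rules = [{
        "rule-type": "selection",
        "rule-id": "1",
        "rule-name": "include-all",
        "object-locator": {"schema-name": "%", "table-name": "%"},
        "rule-action": "include",
    }]
    for rule_id, schema in enumerate(system_schemas, start=2):
        rules.append({
            "rule-type": "selection",
            "rule-id": str(rule_id),
            "rule-name": f"exclude-{schema}",
            "object-locator": {"schema-name": schema, "table-name": "%"},
            "rule-action": "exclude",
        })
    return json.dumps({"rules": rules}, indent=2)


if __name__ == "__main__":
    # Hypothetical schema, table, and column names.
    print(max_lob_query("appdb", "documents", "body"))
    print(max_lob_size_kb(1048576))
    print(build_table_mappings())
```

Run the generated query for each LOB column, take the largest result, and pass it to `max_lob_size_kb` to get a value for Maximum LOB size (KB). Paste the JSON into the task's table mappings editor.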

Once you have validated that the new RDS for MySQL 8.4 environment is working as expected, you can stop the DMS replication task and delete the old RDS for MySQL 8.0 instance.

Clean up

After completing the upgrade and confirming your application is running normally on MySQL 8.4, you should remove the resources created during this process to avoid incurring additional charges. Follow these steps in the AWS Management Console or the AWS Command Line Interface (AWS CLI):

- Blue/Green Deployment (deleting the deployment does not delete the DB instances).

- Open the Amazon RDS console and choose Databases.

- Select the Blue/Green Deployment → Choose ‘Actions’ → ‘Delete’.

- Snapshot-restored RDS instance used for pre-upgrade checks.

- The old RDS for MySQL 8.0 -old1 instance (after you confirm rollback is no longer needed). For more AWS CLI options, see Deleting a DB instance.

- Open the Amazon RDS console and choose Databases.

- Select the instance → Choose ‘Actions’ → ‘Delete’.

- Amazon EC2 instance used for running prechecks (if applicable). For more AWS CLI options, see Terminate Amazon EC2 instances.

- Open the Amazon EC2 console and choose Instances.

- Select the EC2 instance used for running prechecks → Choose ‘Instance state’ → ‘Terminate (delete) instance’.

- AWS DMS replication instance, endpoints, and task (if you used Option B for rollback).

- Tasks:

- Open the AWS DMS console and choose Tasks.

- Select the DMS task used for rollback.

- If the task is still running, choose ‘Actions’ → ‘Stop’.

- After the task has stopped, choose ‘Actions’ → ‘Delete’.

- Endpoints:

- Choose Endpoints.

- Select the source and target endpoints → Choose ‘Actions’ → ‘Delete’.

- Replication instance:

- Choose Provisioned instances.

- Select the replication instance → Choose ‘Actions’ → ‘Delete’.
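For the AWS CLI route, the cleanup order above can be sketched as a Python helper that assembles the commands without running them. This is a sketch under stated assumptions: every identifier and ARN in the usage example is a hypothetical placeholder, and keeping a final snapshot of each deleted DB instance is a conservative choice made here, not a step from the original post.

```python
import shlex


def cleanup_commands(bg_deployment_id, db_instance_ids, ec2_instance_ids=(),
                     dms_task_arn=None, dms_endpoint_arns=(),
                     dms_instance_arn=None):
    """Return AWS CLI commands (as argv lists) in a safe deletion order."""
    cmds = [["aws", "rds", "delete-blue-green-deployment",
             "--blue-green-deployment-identifier", bg_deployment_id]]
    for db_id in db_instance_ids:
        # Keep a final snapshot; it incurs storage cost until you delete it.
        cmds.append(["aws", "rds", "delete-db-instance",
                     "--db-instance-identifier", db_id,
                     "--final-db-snapshot-identifier", f"{db_id}-final"])
    if ec2_instance_ids:
        cmds.append(["aws", "ec2", "terminate-instances",
                     "--instance-ids", *ec2_instance_ids])
    if dms_task_arn:
        task_filter = f"Name=replication-task-arn,Values={dms_task_arn}"
        # Stop the task and wait, then delete it and wait, before touching
        # the endpoints and replication instance it depends on.
        cmds += [
            ["aws", "dms", "stop-replication-task",
             "--replication-task-arn", dms_task_arn],
            ["aws", "dms", "wait", "replication-task-stopped",
             "--filters", task_filter],
            ["aws", "dms", "delete-replication-task",
             "--replication-task-arn", dms_task_arn],
            ["aws", "dms", "wait", "replication-task-deleted",
             "--filters", task_filter],
        ]
    for arn in dms_endpoint_arns:
        cmds.append(["aws", "dms", "delete-endpoint", "--endpoint-arn", arn])
    if dms_instance_arn:
        cmds.append(["aws", "dms", "delete-replication-instance",
                     "--replication-instance-arn", dms_instance_arn])
    return cmds


if __name__ == "__main__":
    # Hypothetical identifiers; review the printed commands before running them.
    for argv in cleanup_commands("bgd-EXAMPLE", ["mydb-old1"],
                                 ec2_instance_ids=["i-0123456789abcdef0"]):
        print(shlex.join(argv))
```

Printing the commands first lets you review each deletion before running it by hand or passing the argv lists to `subprocess.run`. If the DMS task is already stopped, skip the stop and wait steps.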

Conclusion

In this post, we walked through a three-phase approach for upgrading Amazon RDS for MySQL 8.0 to 8.4: interpreting pre-upgrade compatibility checks, performing the upgrade with minimal downtime using Amazon RDS Blue/Green Deployments, and setting up a rollback path with reverse replication. Together, these phases give you a tested, reversible upgrade path and reduce the risk that typically stalls major version upgrades. With MySQL 8.0 standard support ending on July 31, 2026, we encourage you to start planning your upgrade and validating your workloads against MySQL 8.4’s new features and performance improvements. If you need to continue using MySQL 8.0 beyond the standard support end date, see the breakdown of Extended Support costs in Upgrade strategies for Amazon RDS for MySQL 8.0 to 8.4.

To learn more, see Upgrading a MySQL DB instance engine version and Using Amazon RDS Blue/Green Deployments. If you have questions or feedback, leave a comment on this post.

Planet for the MySQL Community

Please enjoy this new video of college students trying to find Iran on a map

https://media.notthebee.com/articles/6a04dc6fb64dc6a04dc6fb64dd.jpg

I know everyone is an expert on Iran – and the Middle East in general – these days, so obviously college graduates are able to point out Iran on a map, right?

Not the Bee